Deep learning

Deep learning models can be seen as a subset of machine learning models, typically based on artificial neural networks. Using deep learning models for point cloud processing often demands top-level hardware. Users interested in these models are strongly encouraged to have a computer with no less than \(128\,\mathrm{GB}\) of RAM, a manycore processor (with many real cores for efficient parallel processing), and a top-level coprocessor like a GPU or a TPU. It is worth mentioning that training deep learning models for dense point clouds is not feasible with a typical CPU, so the coprocessor is a must. However, using an already trained deep learning model might be possible without a coprocessor, provided the system has a top-level CPU and high amounts of RAM.

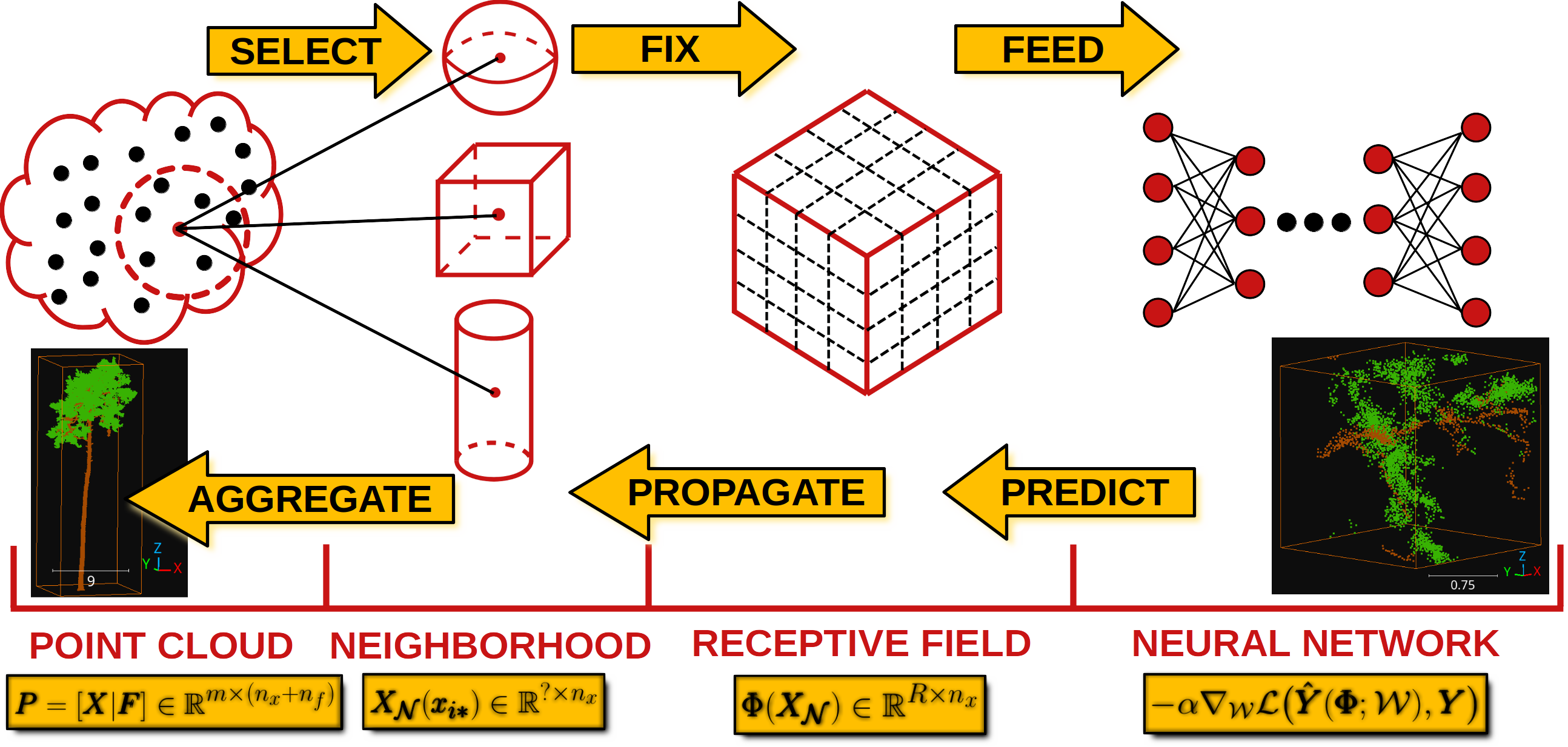

The deep learning models in the VL3D framework are based on the strategy represented in the figure below. First, it is necessary to select a set of neighborhoods that represents the input point cloud. These can overlap between themselves, i.e., the same point can be in more than one neighborhood. The neighborhoods can be defined as spheres, voxels, cylinders, or many more. Now, note that each neighborhood can contain a different number of points. In the VL3D framework, the input neighborhoods must be transformed into fixed-size representations (in terms of the number of points) that will be later grouped into batches to be fed into the neural network.

Once the neural network has computed the output, it will be propagated back from the fixed-size receptive fields to the original neighborhoods, for example, through a nearest-neighbor strategy. As there might be many outputs for the same point, the values in the neighborhoods are aggregated (also reduced), so there is one final value per point in the original point cloud (provided that the input neighborhoods cover the entire point cloud).

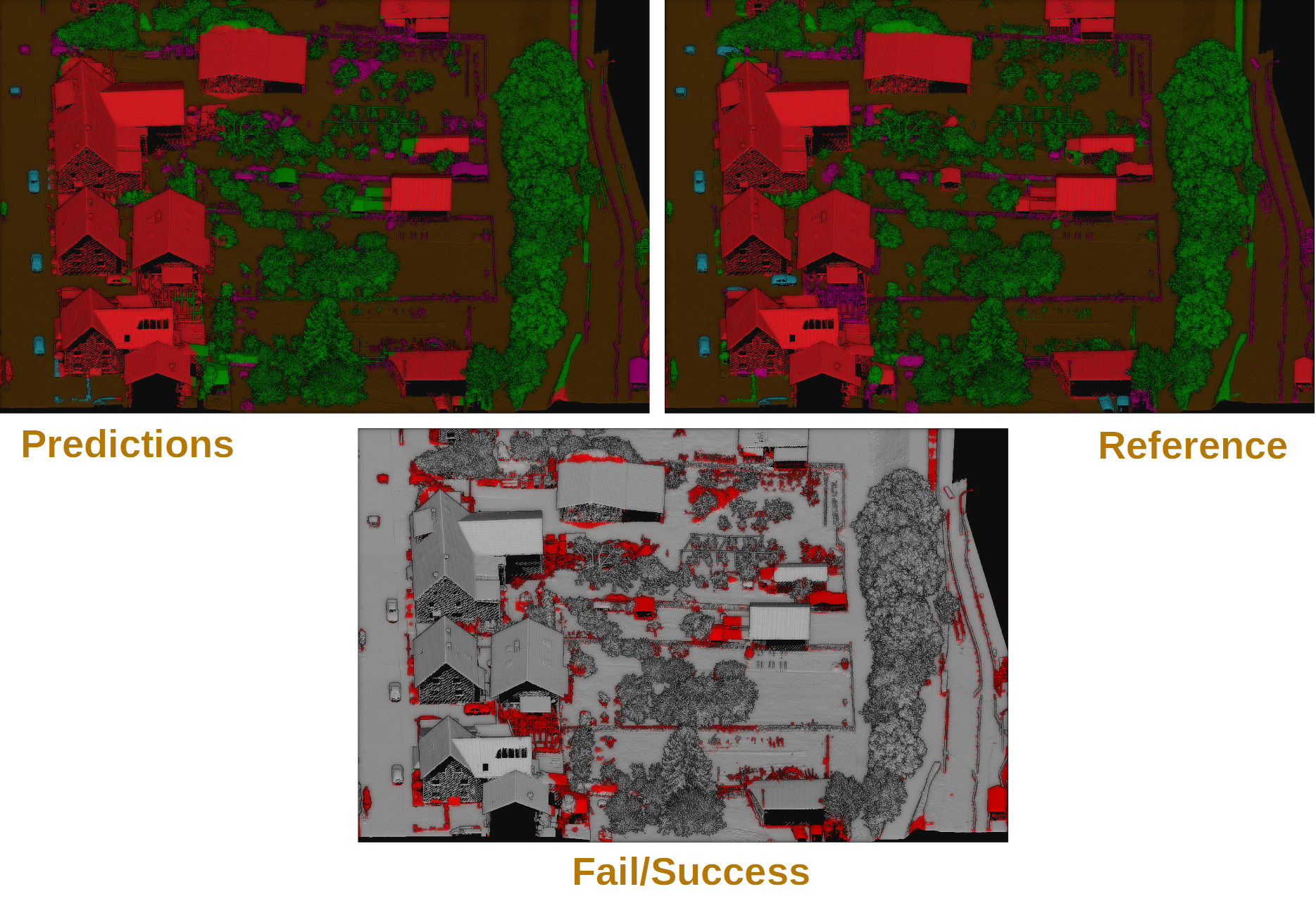

Visualization of the deep learning strategy used by the VL3D framework.

The VL3D framework uses Keras and TensorFlow as the deep learning backend. The usage of deep learning models is documented below. However, for this documentation users are expected to be already familiar with the framework, especially with how to define pipelines. If that is not the case, we strongly encourage you to read the documentation about pipelines before.

Models

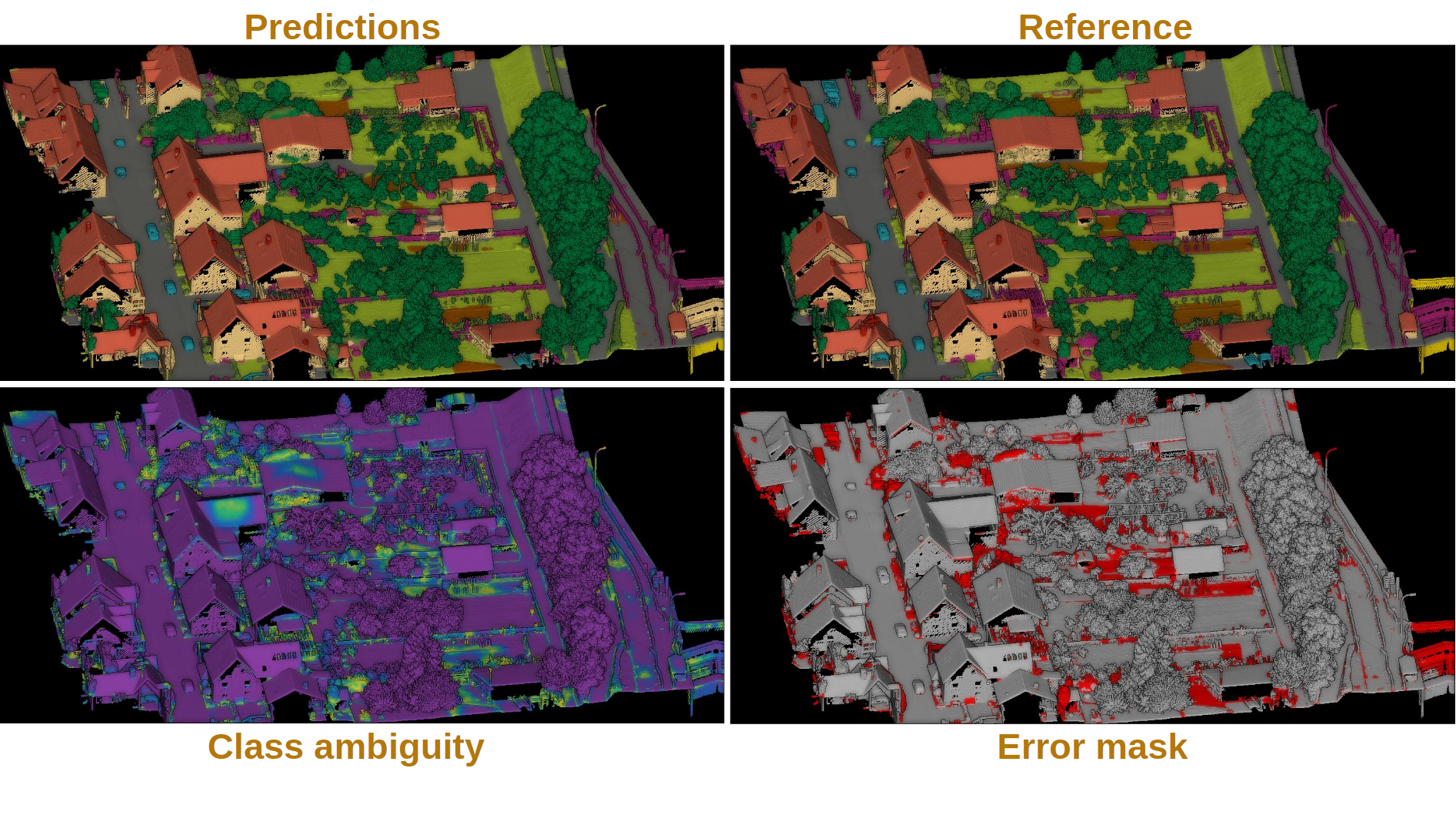

PointNet-based point-wise classifier

The PointNetPwiseClassif can be used to solve point-wise classification

tasks. This model is based on the PointNet architecture and it can be defined

as shown in the JSON below:

{

"train": "PointNetPwiseClassifier",

"fnames": ["AUTO"],

"training_type": "base",

"random_seed": null,

"model_args": {

"num_classes": 5,

"class_names": ["Ground", "Vegetation", "Building", "Urban furniture", "Vehicle"],

"num_pwise_feats": 16,

"pre_processing": {

"pre_processor": "furthest_point_subsampling",

"to_unit_sphere": false,

"support_strategy": "grid",

"support_chunk_size": 2000,

"support_strategy_fast": false,

"_training_class_distribution": [1000, 1000, 1000, 1000, 1000],

"center_on_pcloud": true,

"num_points": 4096,

"num_encoding_neighbors": 1,

"fast": false,

"neighborhood": {

"type": "rectangular3D",

"radius": 5.0,

"separation_factor": 0.8

},

"nthreads": 12,

"training_receptive_fields_distribution_report_path": "*/training_eval/training_receptive_fields_distribution.log",

"training_receptive_fields_distribution_plot_path": "*/training_eval/training_receptive_fields_distribution.svg",

"training_receptive_fields_dir": "*/training_eval/training_receptive_fields/",

"receptive_fields_distribution_report_path": "*/training_eval/receptive_fields_distribution.log",

"receptive_fields_distribution_plot_path": "*/training_eval/receptive_fields_distribution.svg",

"receptive_fields_dir": "*/training_eval/receptive_fields/",

"training_support_points_report_path": "*/training_eval/training_support_points.las",

"support_points_report_path": "*/training_eval/support_points.las"

},

"kernel_initializer": "he_normal",

"pretransf_feats_spec": [

{

"filters": 32,

"name": "prefeats32_A"

},

{

"filters": 32,

"name": "prefeats_32B"

},

{

"filters": 64,

"name": "prefeats_64"

},

{

"filters": 128,

"name": "prefeats_128"

}

],

"postransf_feats_spec": [

{

"filters": 128,

"name": "posfeats_128"

},

{

"filters": 256,

"name": "posfeats_256"

},

{

"filters": 64,

"name": "posfeats_end_64"

}

],

"tnet_pre_filters_spec": [32, 64, 128],

"tnet_post_filters_spec": [128, 64, 32],

"final_shared_mlps": [512, 256, 128],

"skip_link_features_X": false,

"include_pretransf_feats_X": false,

"include_transf_feats_X": true,

"include_postransf_feats_X": false,

"include_global_feats_X": true,

"skip_link_features_F": false,

"include_pretransf_feats_F": false,

"include_transf_feats_F": true,

"include_postransf_feats_F": false,

"include_global_feats_F": true,

"model_handling": {

"summary_report_path": "*/model_summary.log",

"training_history_dir": "*/training_eval/history",

"class_weight": [0.25, 0.5, 0.5, 1, 1],

"training_epochs": 200,

"batch_size": 16,

"checkpoint_path": "*/checkpoint.weights.h5",

"checkpoint_monitor": "loss",

"learning_rate_on_plateau": {

"monitor": "loss",

"mode": "min",

"factor": 0.1,

"patience": 2000,

"cooldown": 5,

"min_delta": 0.01,

"min_lr": 1e-6

},

"early_stopping": {

"monitor": "loss",

"mode": "min",

"min_delta": 0.01,

"patience": 5000

},

"prediction_reducer": {

"reduce_strategy" : {

"type": "MeanPredReduceStrategy"

},

"select_strategy": {

"type": "ArgMaxPredSelectStrategy"

}

}

},

"compilation_args": {

"optimizer": {

"algorithm": "SGD",

"learning_rate": {

"schedule": "exponential_decay",

"schedule_args": {

"initial_learning_rate": 1e-2,

"decay_steps": 2000,

"decay_rate": 0.96,

"staircase": false

}

}

},

"loss": {

"function": "class_weighted_categorical_crossentropy"

},

"metrics": [

"categorical_accuracy"

]

},

"architecture_graph_path": "*/model_graph.png",

"architecture_graph_args": {

"show_shapes": true,

"show_dtype": true,

"show_layer_names": true,

"rankdir": "TB",

"expand_nested": true,

"dpi": 300,

"show_layer_activations": true

}

},

"training_evaluation_metrics": ["OA", "P", "R", "F1", "IoU", "wP", "wR", "wF1", "wIoU", "MCC", "Kappa"],

"training_class_evaluation_metrics": ["P", "R", "F1", "IoU"],

"training_evaluation_report_path": "*/training_eval/evaluation.log",

"training_class_evaluation_report_path": "*/training_eval/class_evaluation.log",

"training_confusion_matrix_report_path": "*/training_eval/confusion.log",

"training_confusion_matrix_plot_path": "*/training_eval/confusion.svg",

"training_class_distribution_report_path": "*/training_eval/class_distribution.log",

"training_class_distribution_plot_path": "*/training_eval/class_distribution.svg",

"training_classified_point_cloud_path": "*/training_eval/classified_point_cloud.las",

"training_activations_path": "*/training_eval/activations.las"

}

The JSON above defines a PointNetPwiseClassif that uses a

furthest point subsampling strategy with a 3D rectangular neighborhood. The

optimization algorithm to train the neural network is stochastic gradient

descent (SGD). The loss function is a categorical cross-entropy that accounts

for class weights. The class weights can be used to handle data imbalance.

Arguments

- –

fnames The names of the features that must be considered by the neural network.

- –

training_type Typically it should be

"base"for neural networks. For further details, read the training strategies section.- –

random_seed Can be used to specify an integer like seed for any randomness-based computation. Mostly to be used for reproducibility purposes. Note that the initialization of a neural network is often based on random distributions. This parameter does not affect those distributions, so it will not guarantee reproducibility for of deep learning models.

- –

model_args The model specification.

- –

fnames If the input to the model involves features, their names must be given again inside the

model_argsdictionary due to technical reasons.- –

num_classess An integer specifying the number of classes involved in the point-wise classification tasks.

- –

class_names The names of the classes involved in the classification task. Each string corresponds to the class associated to its index in the list.

- –

num_pwise_feats How many point-wise features must be computed.

- –

pre_processing How the select and fix stages of the deep learning strategy must be handled. See the receptive fields section for further details.

- –

kernel_initializer The name of the kernel initialization method. See Keras documentation on layer initializers for further details.

- –

pretransf_feats_spec A list of dictionaries where each dictionary defines a layer to be placed before the transformation block in the middle. Each dictionary must contain

filters(an integer specifying the output dimensionality of the layer) andname(a string representing the layer’s name).- –

postransf_feats_spec A list of dictionaries where each dictionary defines a layer to be placed after the transformation block in the middle. Each dictionary must contain

filters(an integer specifying the output dimensionality of the layer) andname(a string representing the layer’s name).- –

tnet_pre_filters_spec A list of integers where each integer specifies the output dimensionality of a convolutional layer placed before the global pooling.

- –

tnet_post_filters_spec A list of integers where each integer specifies the output dimensionality of a dense layer (MLP) placed after the global pooling.

- –

final_shared_mlps A list of integers where each integer specifies the output dimensionality of the shared MLP (i.e., 1D Conv with unitary window and stride). These are called final because they are applied immediately before the convolution that reduces the number of point-wise features that constitute the input of the final layer.

- –

skip_link_features_X Whether to propagate the input structure space to the final concatenation of features (True) or not (False).

- –

include_pretransf_feats_X Whether to propagate the values of the hidden layers that processed the structure space before the second transformation block to the final concatenation of features (True) or not (False).

- –

include_transf_feats_X Whether to propagate the values of the hidden layers that processed the structure space in the second transformation block to the final concatenation of features (True) or not (False).

- –

include_postransf_feats_X Whether to propagate the values of the hidden layers that processed the structure space after the second transformation block to the final concatenation of features (True) or not (False).

- –

include_global_feats_X Whether to propagate the global features derived from the structure space to the final concatenation of features (True) or not (False).

- –

skip_link_features_F Whether to propagate the input feature space to the final concatenation of features (True) or not (False).

- –

include_pretransf_feats_F Whether to propagate the values of the hidden layers that processed the feature space before the second transformation block to the final concatenation of features (True) or not (False).

- –

include_transf_feats_F Whether to propagate the values of the hidden layers that processed the feature space in the second transformation block to the final concatenation of features (True) or not (False).

- –

include_postransf_feats_F Whether to propagate the values of the hidden layers that processed the feature space after the second transformation block to the final concatenation of features (True) or not (False).

- –

include_global_feats_F Whether to propagate the global features derived from the feature space to the final concatenation of features (True) or not (False).

- –

features_structuring_layerEXPERIMENTAL Specification for the

FeaturesStructuringLayerthat uses radial basis functions to transform the features. This layer is experimental and it is not part of typical PointNet-like architectures. Users are strongly encouraged to avoid using this layer. At the moment it is experimental and should only be used for development and research purposes.

- –

- –

architecture_graph_pathPath where the plot representing the neural network’s architecture wil be exported.

- –

architecture_graph_argsArguments governing the architecture’s graph. See Keras documentation on plot_model for further details.

- –

model_handlingDefine how to handle the model, i.e., not the architecture itself but how it must be used.

- –

summary_report_pathPath where a text describing the built network’s architecture must be exported.

- –

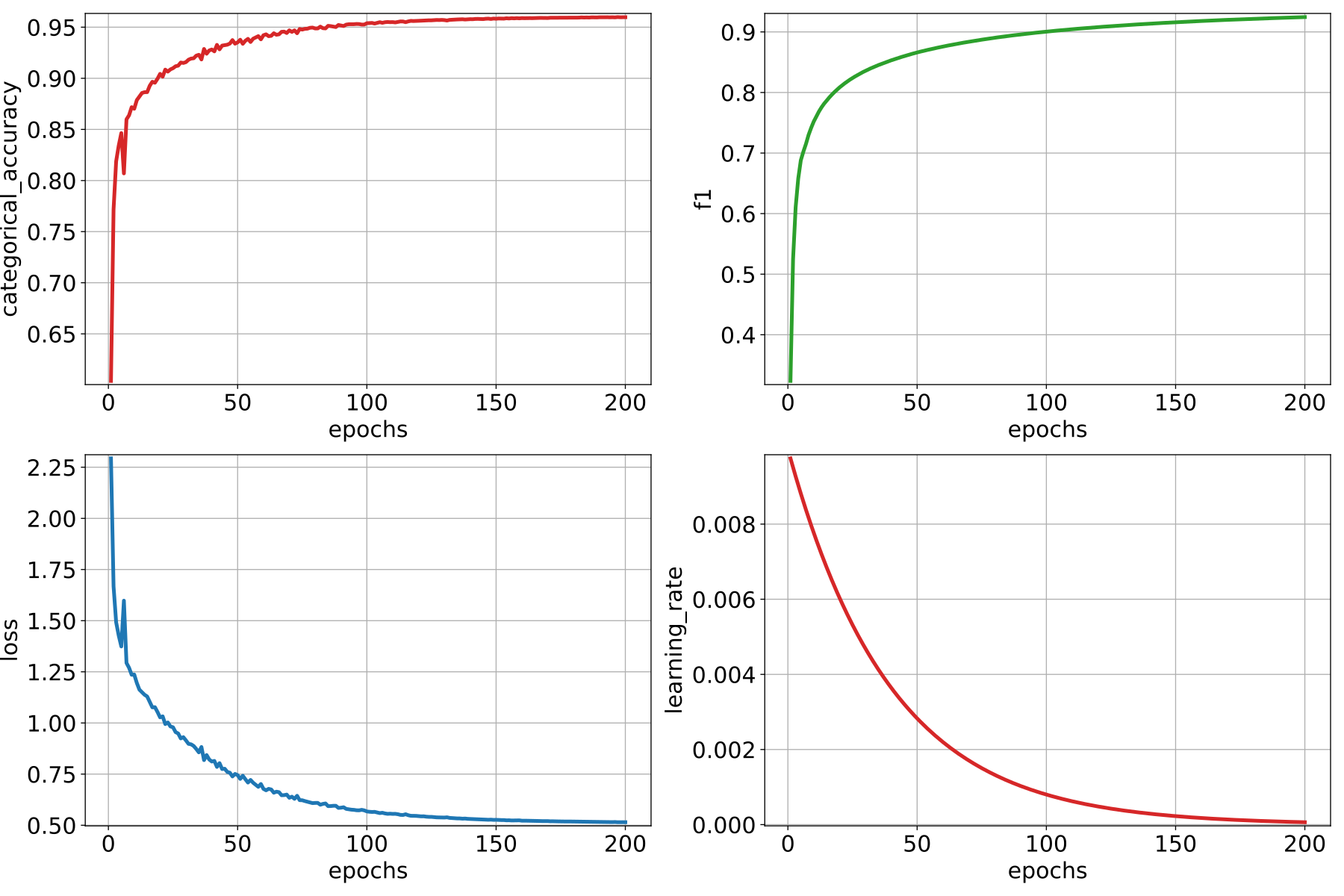

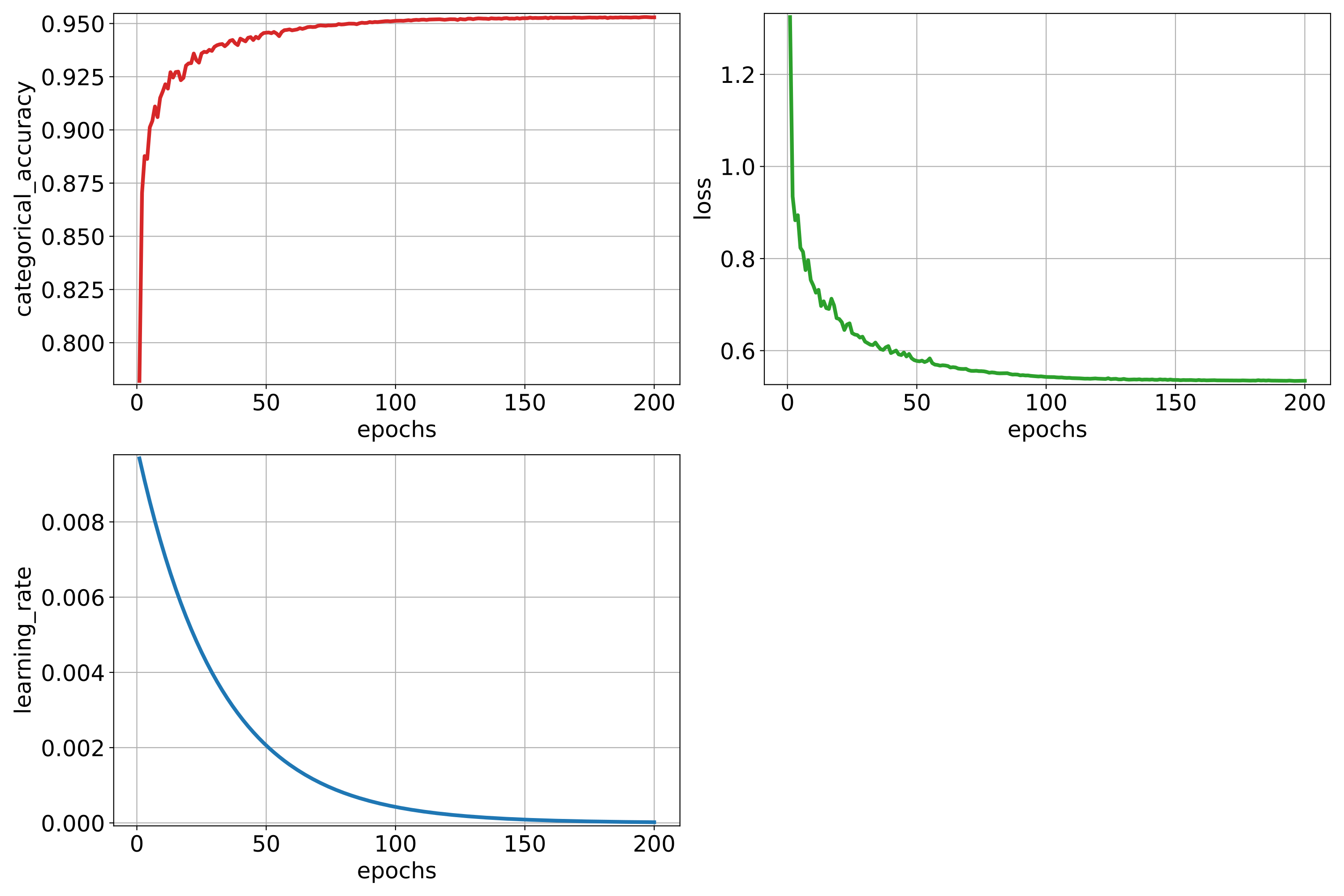

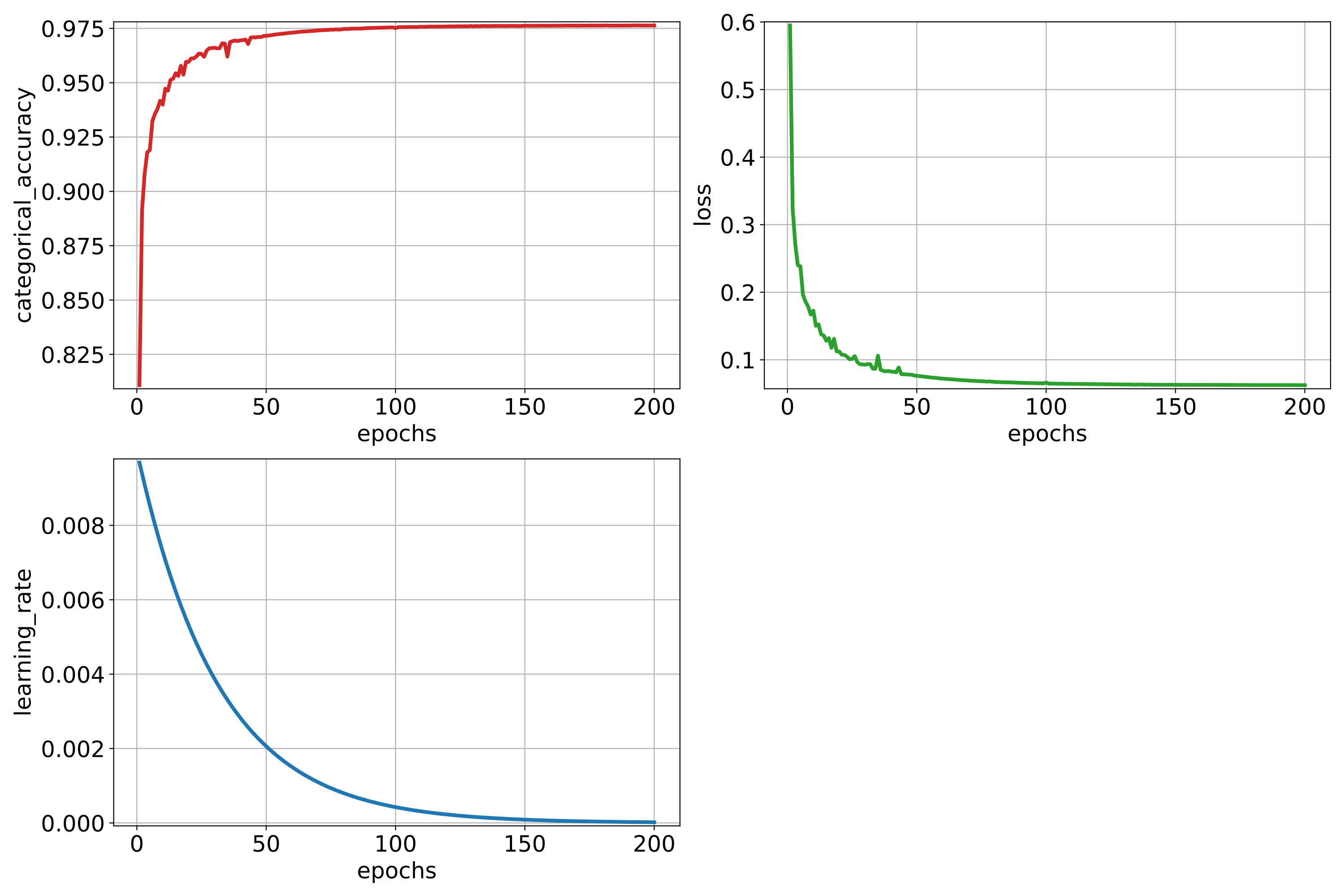

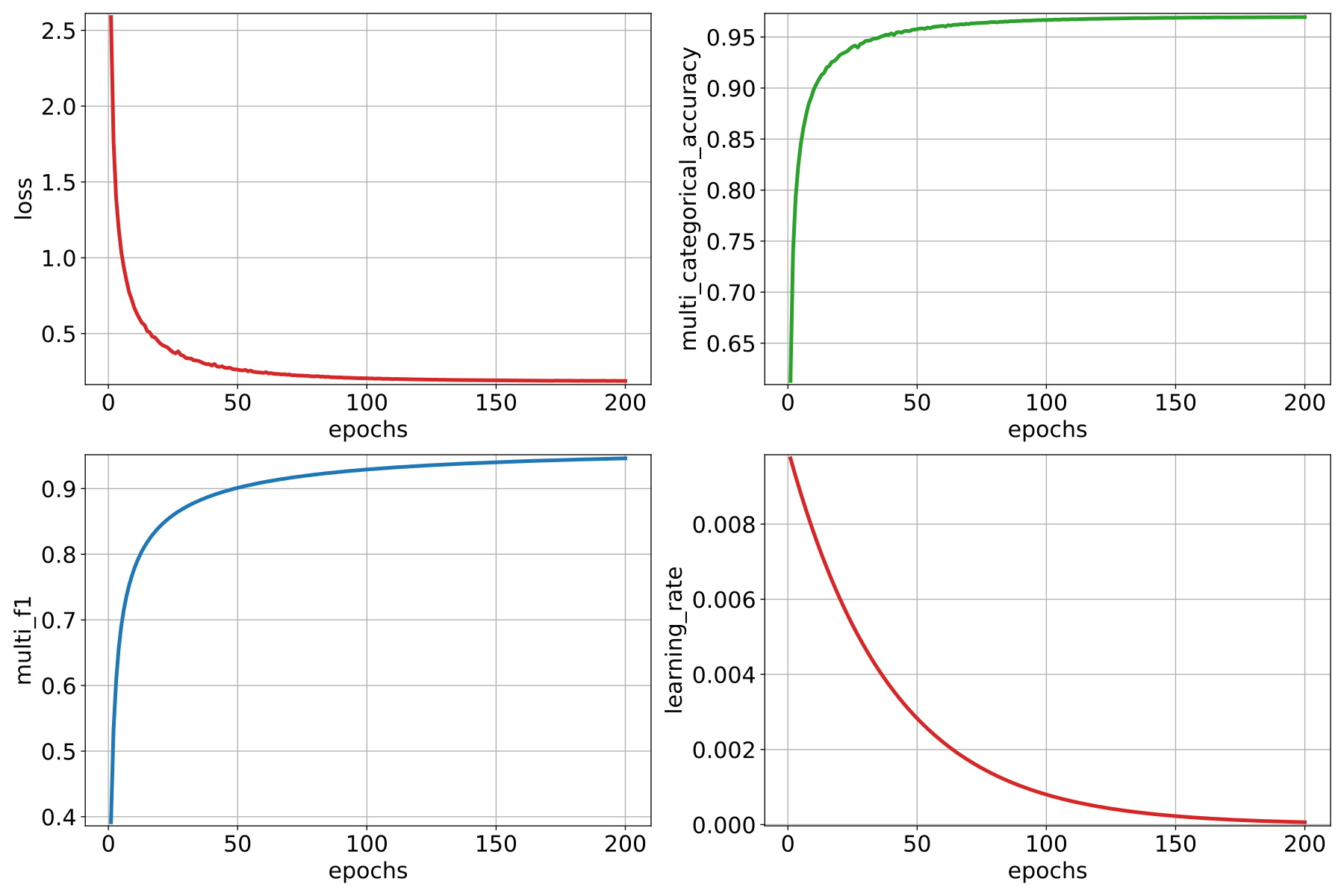

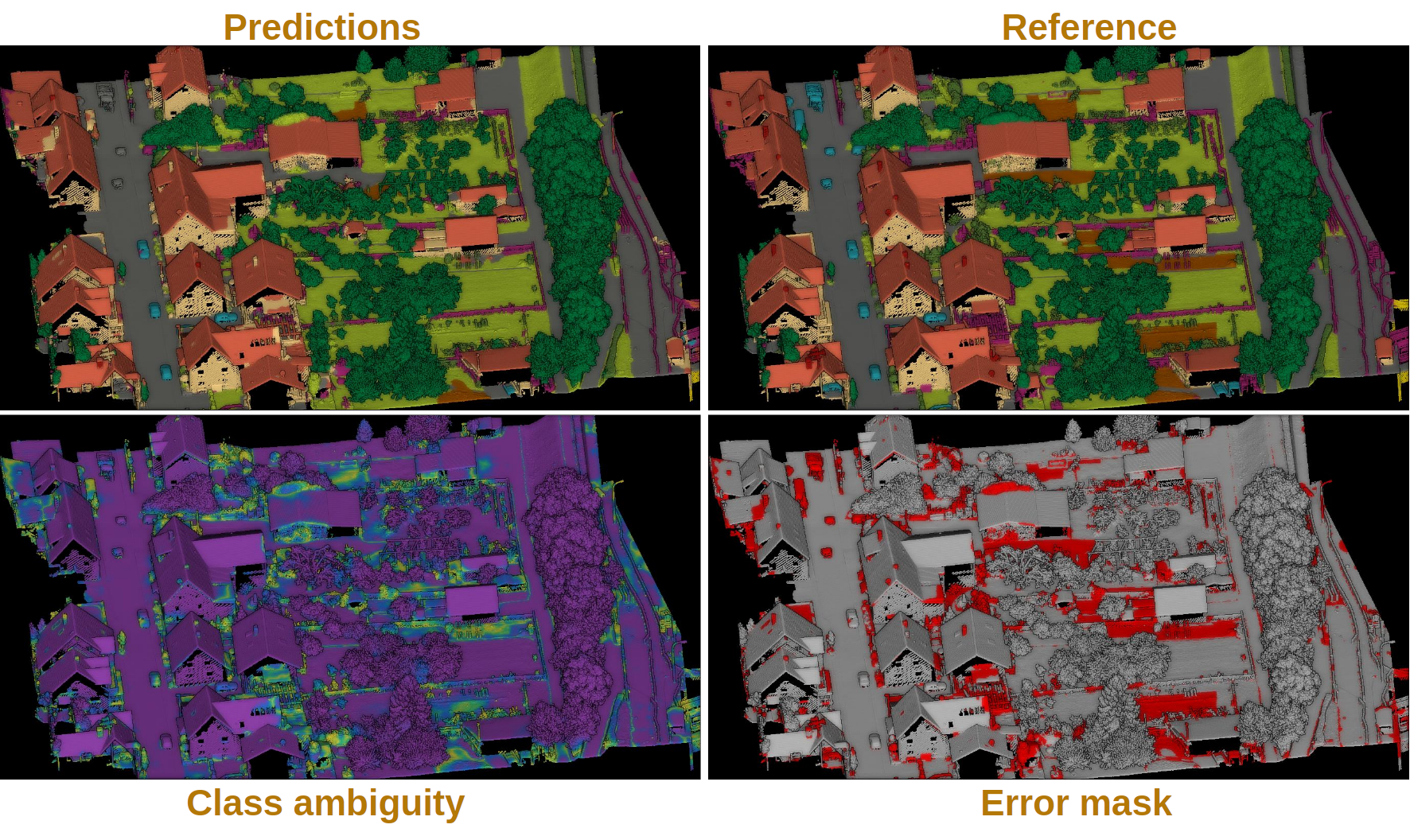

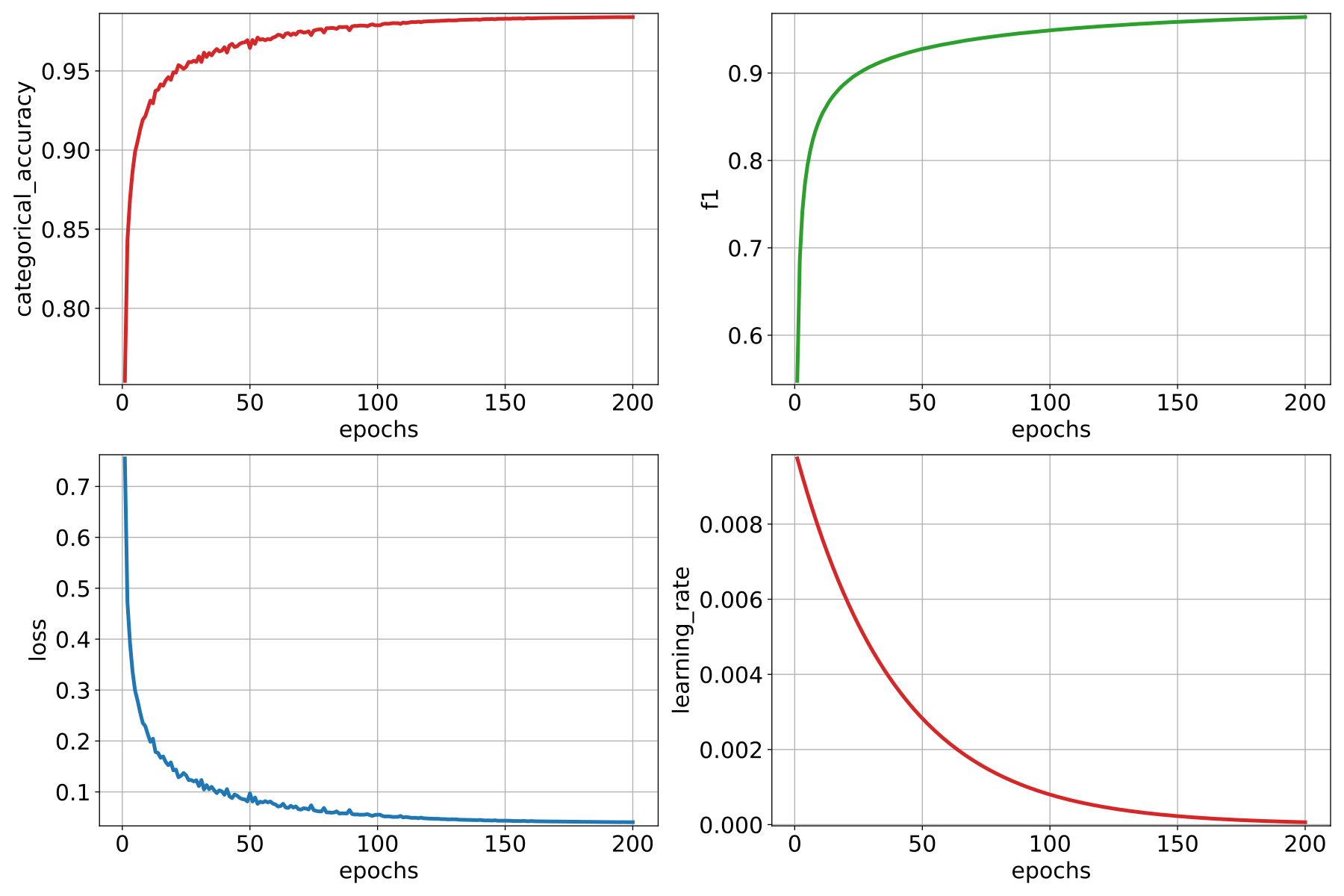

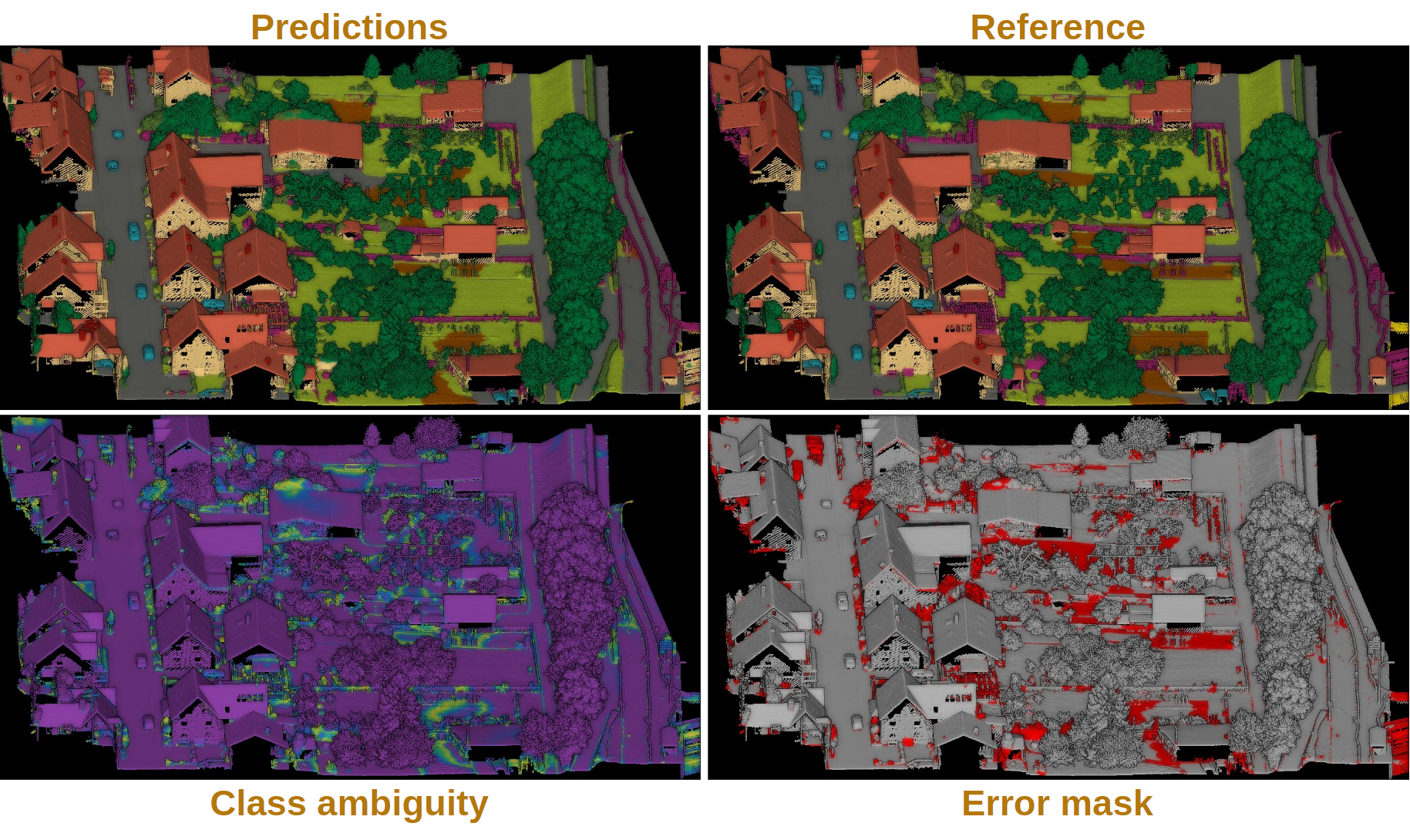

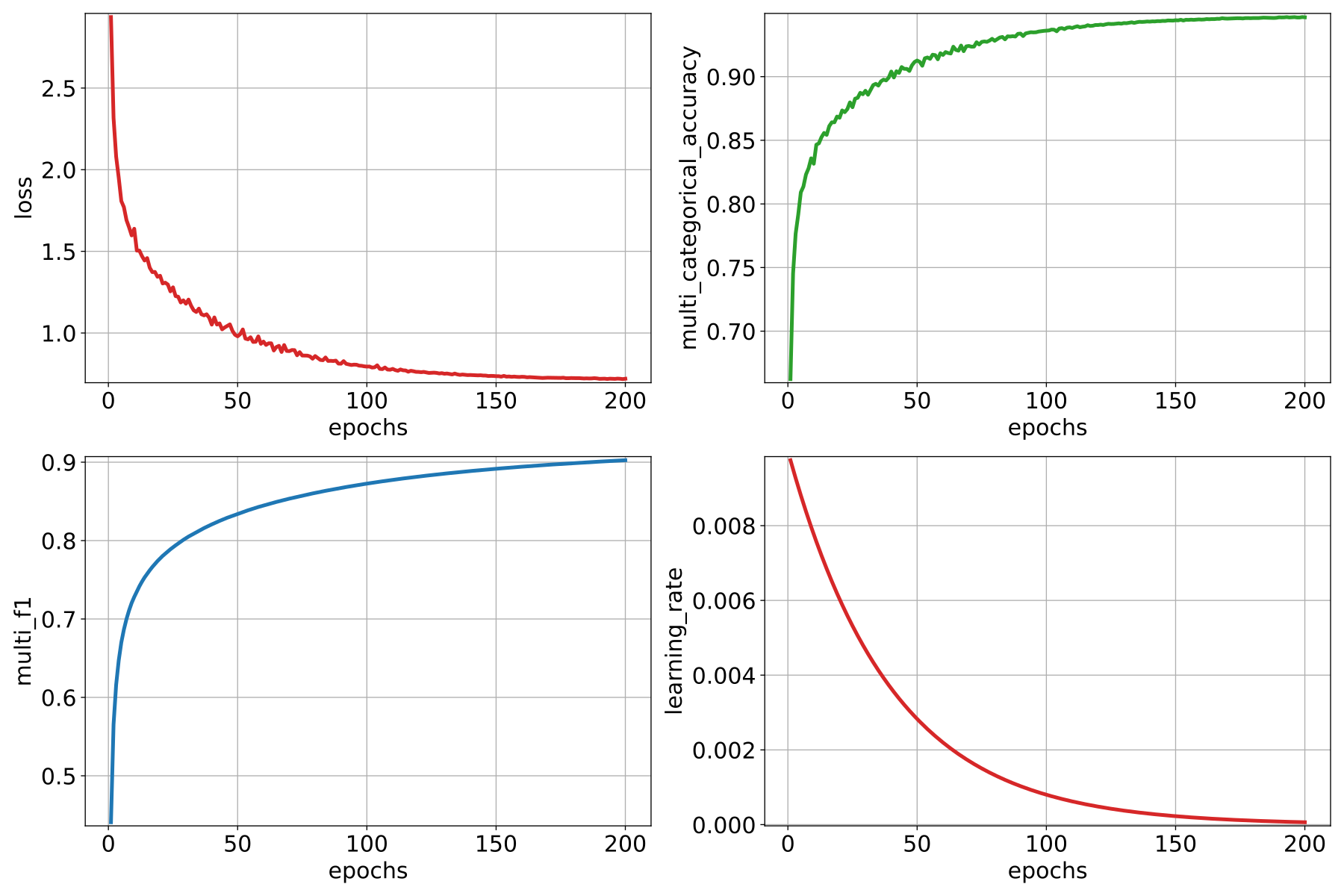

training_history_dirPath where the data (plots and text) describing the training process must be exported.

- –

class_weightThe class weights for the model’s loss. It can be

nullin which case no class weights will be considered. Alternatively, it can be"AUTO"to automatically compute the class weights based on TensorFlow’s imbalanced data tutorial. It can also be a list with as many elements as classes where each element governs the class weight for the corresponding class.- –

training_epochsHow many epochs must be considered to train the model.

- –

batch_sizeHow many receptive fields per batch must be grouped together as input for the neural network.

- –

checkpoint_pathPath where a checkpoint of the model’s current status can be exported. When given, it will be used during training to keep the best model. The extension of the file must be necessarily

".weights.h5".- –

checkpoint_monitorWhat metric must be analyzed to decide what is the best model when using the checkpoint strategy. See the Keras documentation on ModelCheckpoint for more information.

- –

learning_rate_on_plateauWhen given, it can be used to configure the learning rate on plateau callback. See the Keras documentation on ReduceLROnPlateau for more information.

- –

early_stoppingWhen given, it can be used to configure the early stopping callback. See the Keras documentation on EarlyStopping for more information.

- –

prediction_reducerCan be used to modify the default prediction reduction strategies. It is a dictionary that supports a

"reduce_strategy"specification and also a"select_strategy"specification.

- –

reduce_strategySupported types are

SumPredReduceStrategy,MeanPredReduceStrategy(default),MaxPredReduceStrategy, andEntropicPredReduceStrategy.- –

select_strategySupported types are

ArgMaxPredSelectStrategy(default).- –

fit_verboseWhether to use silent mode (0), show a progress bar (1), or print one line per epoch (2) when training a model. Alternatively,

"auto"can be used, which typically means (1).- –

predict_verboseWhether to use silent mode (0), show a progress bar (1), or print one line per epoch (2) when using a model to predict. Alternatively,

"auto"can be used, which typically means (1).

- –

compilation_argsThe arguments governing the model’s compilation. They include the optimizer, the loss function and the metrics to be monitored during training. See the optimizers section and losses section for further details.

- –

training_evaluation_metrics What metrics must be considered to evaluate the model on the training data.

"OA"Overall accuracy."P"Precision."R"Recall."F1"F1 score (harmonic mean of precision and recall)."IoU"Intersection over union (also known as Jaccard index)."wP"Weighted precision (weights by the number of true instances for each class)."wR"Weighted recall (weights by the number of true instances for each class)."wF1"Weighted F1 score (weights by the number of true instances for each class)."wIoU"Weighted intersection over union (weights by the number of true instances for each class)."MCC"Matthews correlation coefficient."Kappa"Cohen’s kappa score.

- –

training_class_evaluation_metrics What class-wose metrics must be considered to evaluate the model on the training data.

"P"Precision."R"Recall."F1"F1 score (harmonic mean of precision and recall)."IoU"Intersection over union (also known as Jaccard index).

- –

training_evaluation_report_path Path where the report about the model evaluated on the training data must be exported.

- –

training_class_evaluation_report_path Path where the report about the model’s class-wise evaluation on the training data must be exported.

- –

training_confusion_matrix_report_path Path where the confusion matrix must be exported (in text format).

- –

training_confusion_matrix_plot_path Path where the confusion matrix must be exported (in image format).

- –

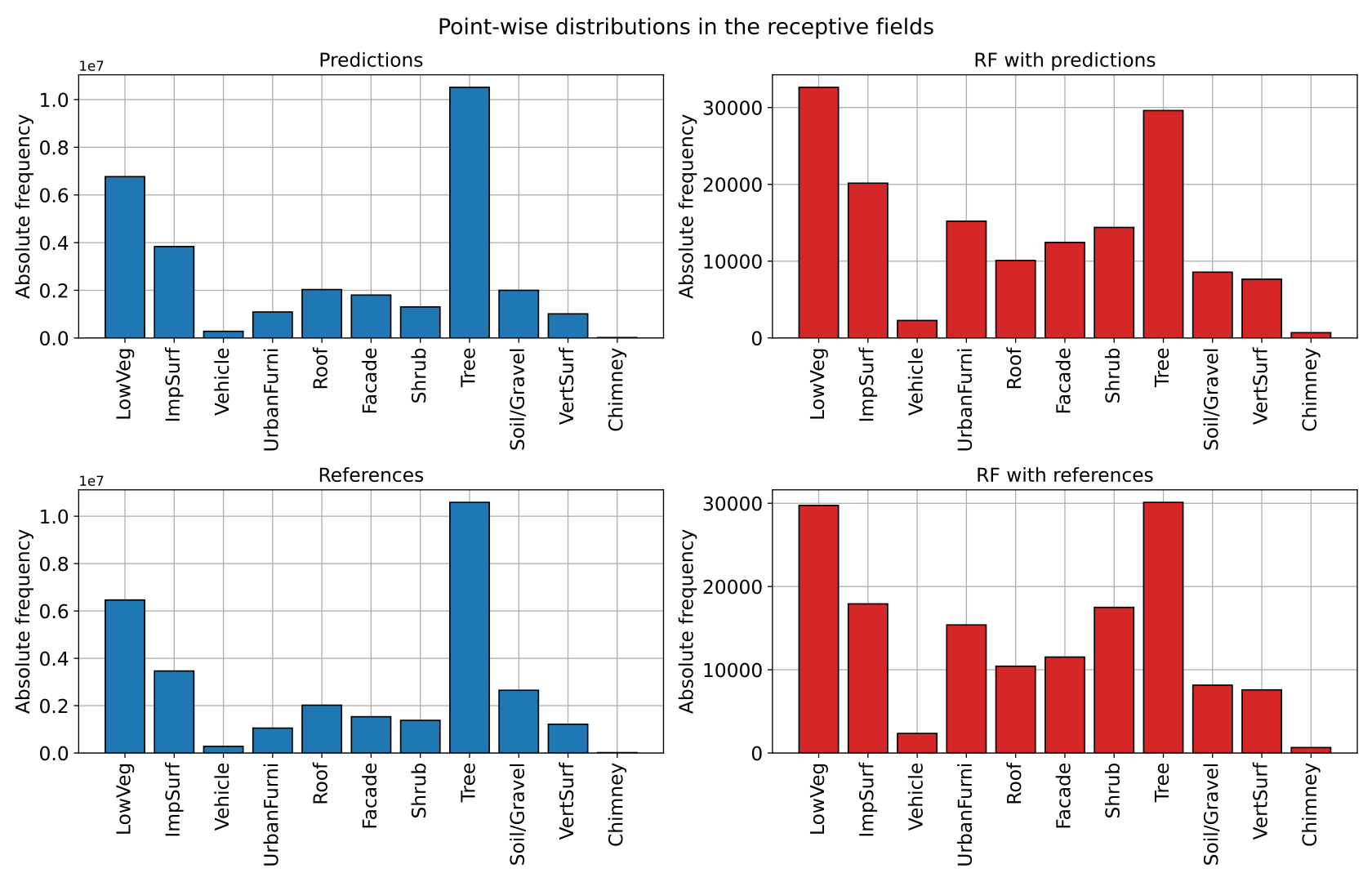

training_class_distribution_report_path Path where the analysis of the classes distribution must be exported (in text format).

- –

training_class_distribution_plot_path Path where the analysis of the classes distribution must be exported (in image format).

- –

training_classifier_point_cloud_path Path where the training data with the model’s predictions must be exported.

- –

training_activations_path Path where a point cloud representing the point-wise activations of the model must be exported. It might demand a lot of memory. However, it can be useful to understand, debug, and improve the model.

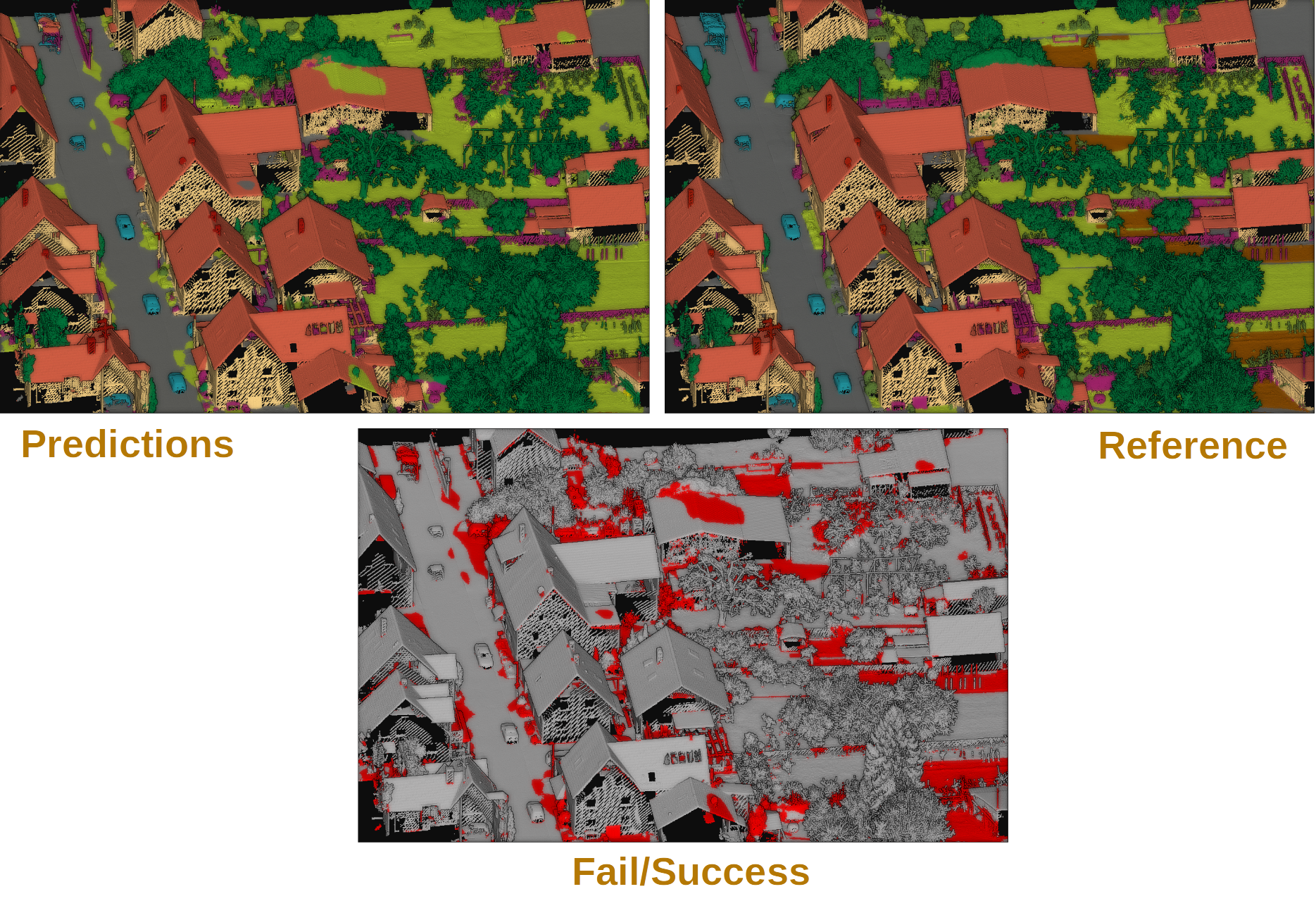

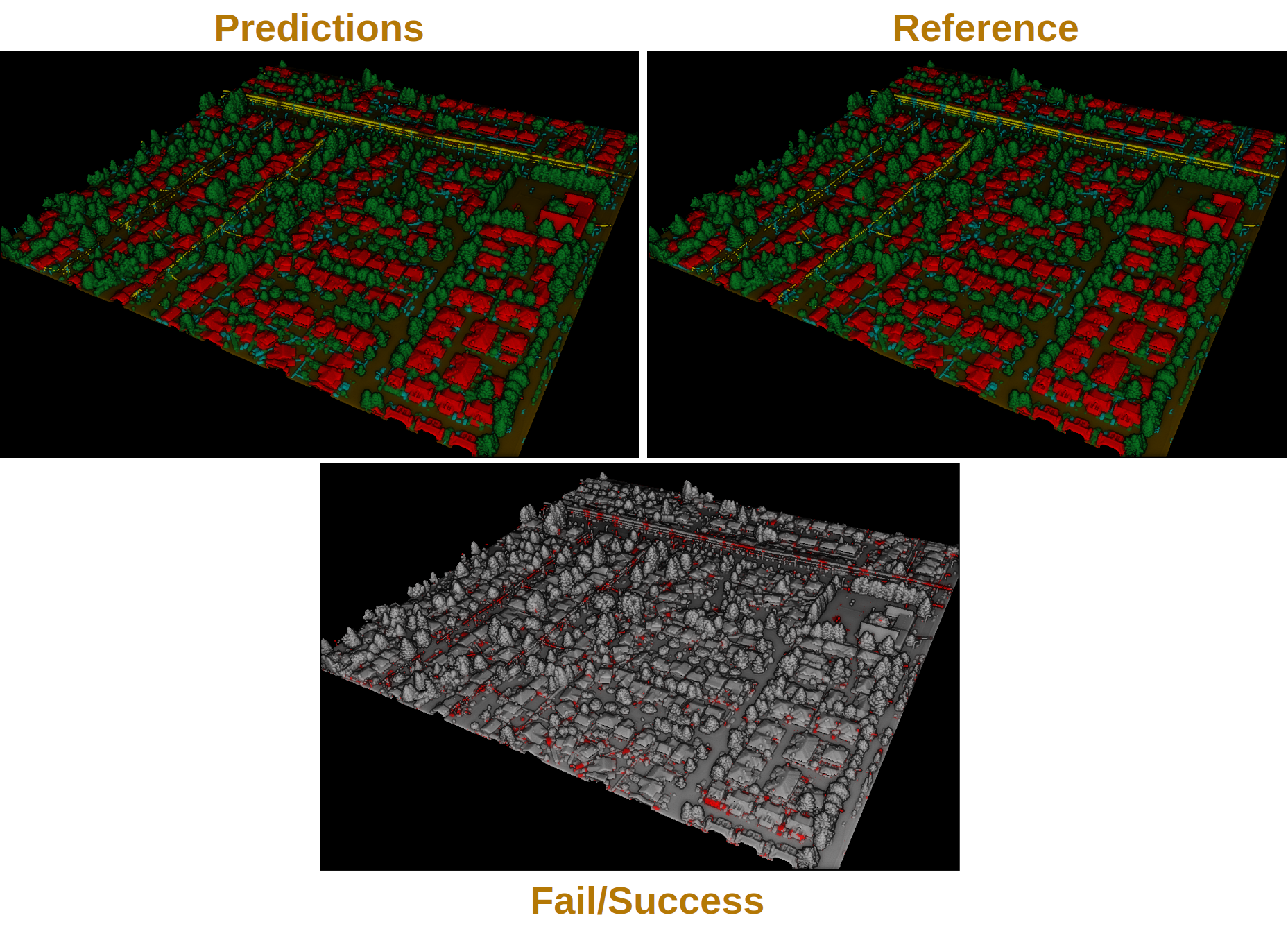

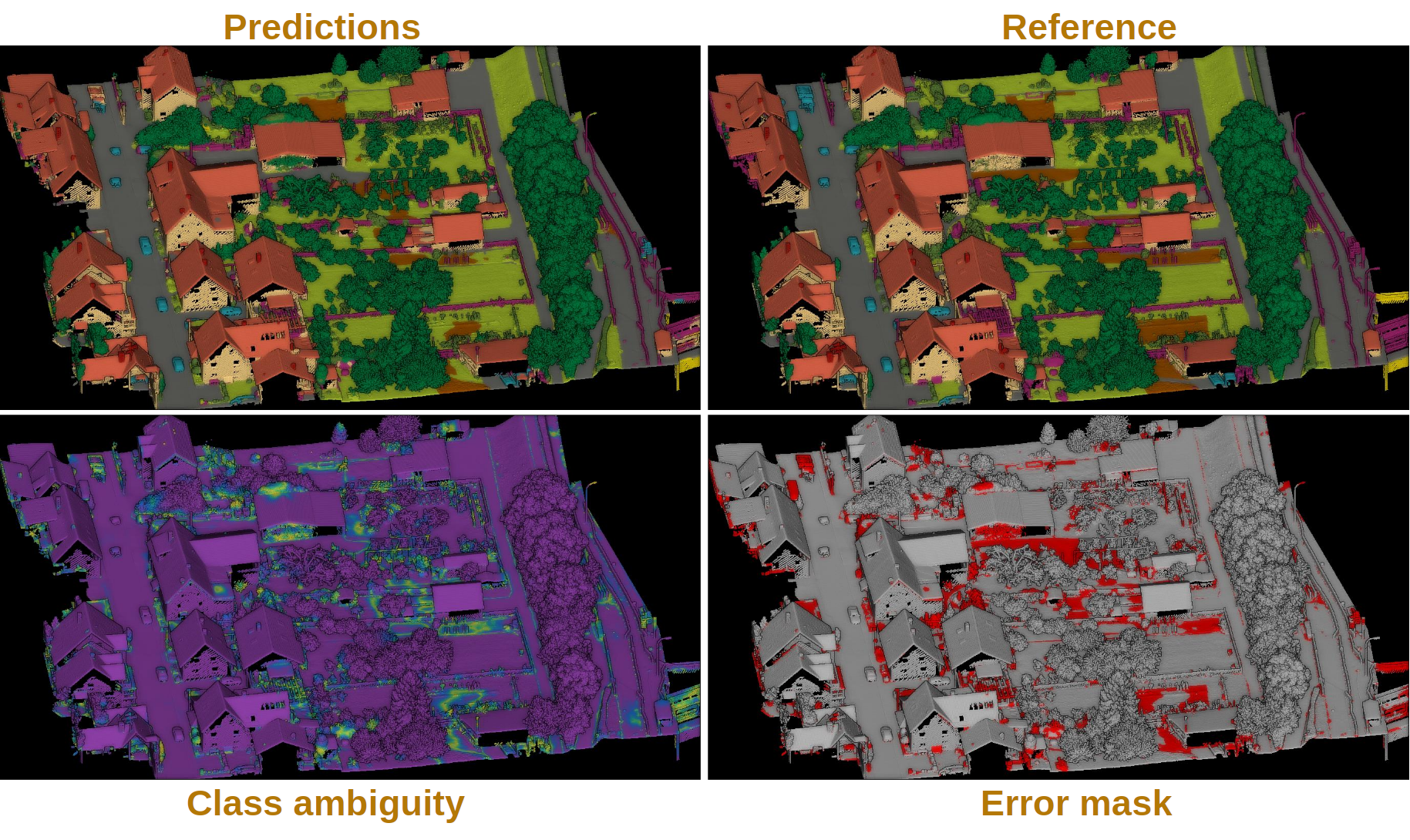

Hierarchical autoencoder point-wise classifier

Hierarchical autoencoders for point-wise classification are available in the

framework through the ConvAutoencPwiseClassif architecture. They are

also referred to in the documentation as convolutional autoencoders. In the

scientific literature they are widely known as hierarchical feature extractors

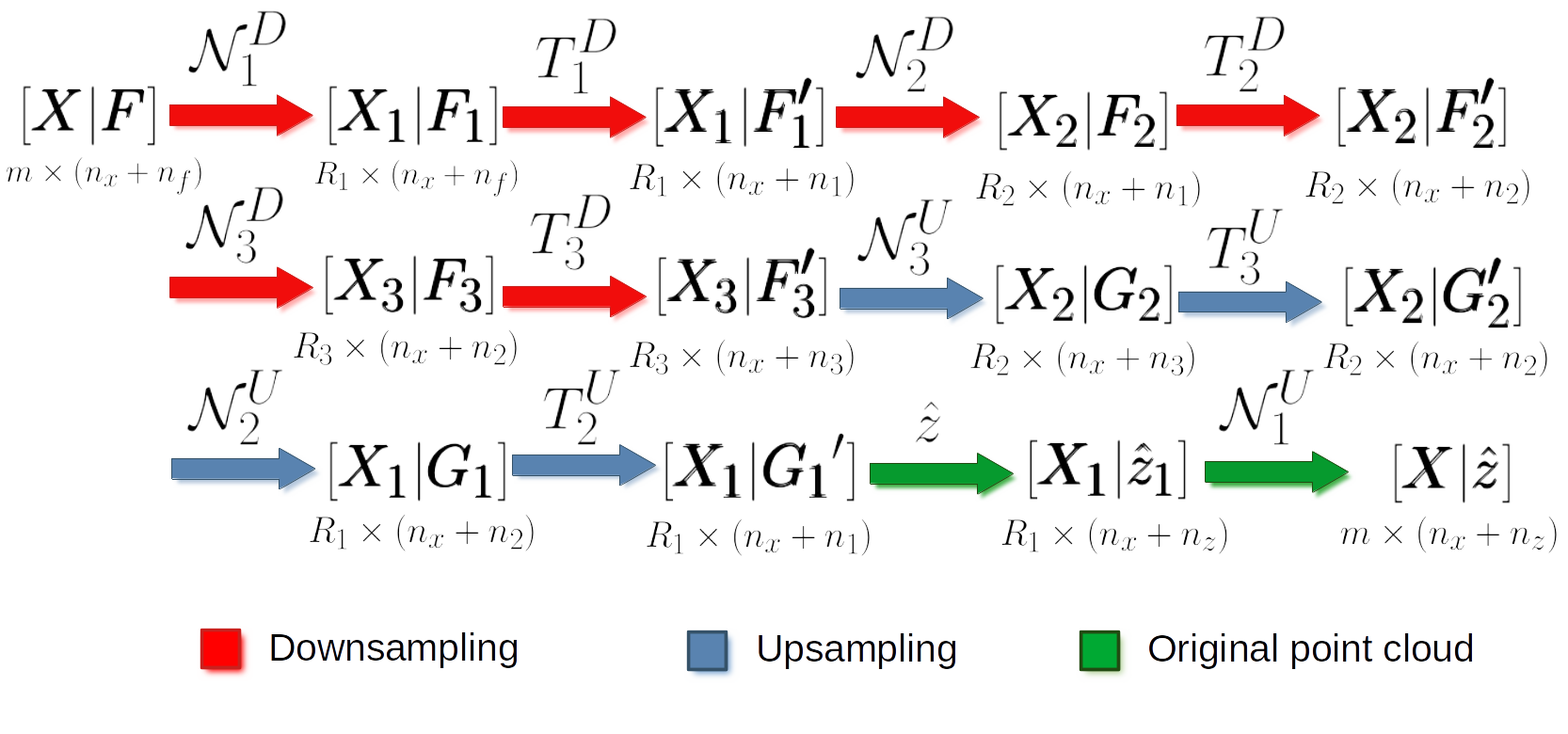

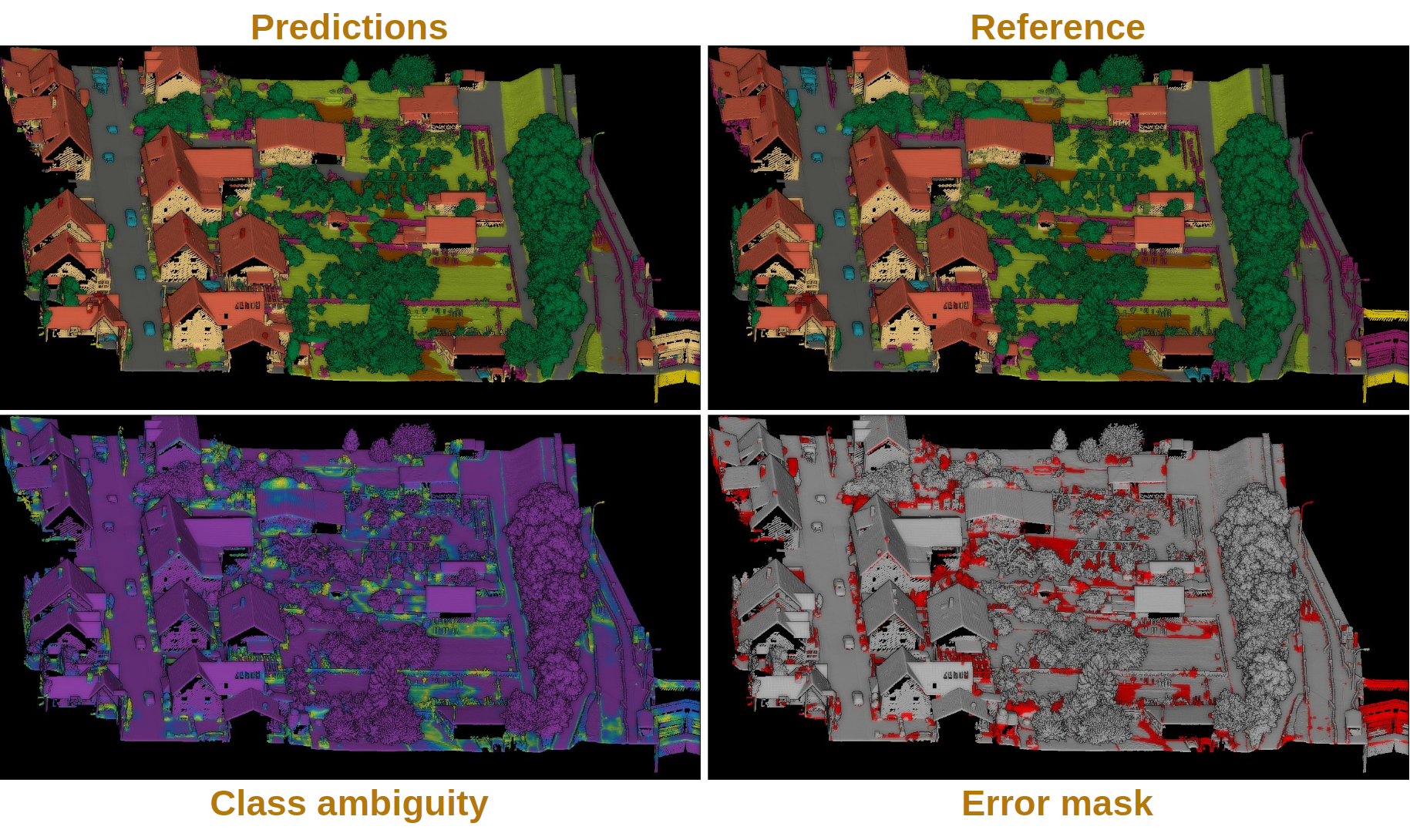

too. The figure below summarized the main logic of hierarchical autoencoders

for point clouds.

Representation of the main logic governing hierarchical autoencoders for point clouds based on hierarchical receptive fields.

Initially, we have a 3D structure space \(\pmb{X} \in \mathbb{R}^{m \times 3}\) with \(m\) points and the corresponding feature space \(\pmb{F} \in \mathbb{R}^{m \times n_f}\) with \(n_f\) features. For a given depth, for example for depth three (as illustrated in the figure above), there is a set of downsampling stages followed by a set of upsampling stages.

At a given depth \(d\), there is a non downsampled structure space \(\pmb{X_{d-1}} \in \mathbb{R}^{R_{d-1} \times 3}\) and its corresponding \(\pmb{X_{d}} \in \mathbb{R}^{R_d \times 3}\) downsampled version. The neighborhood \(\mathcal{N}_d^D\) can be represented with an indexing matrix \(\pmb{N}_{d}^{D} \in \mathbb{Z}^{R_d \times \kappa_d^D}\) that defines for each of the \(R_d\) points in the downsampled space its \(\kappa_d^D\) closest neighbors in the non downsampled space.

Once in the downsampling space, a transformation \(T_d^D\) is applied to downsampled feature space to obtain a new set of features. This transformation can be done using different operators like PointNet or Kernel Point Convolution (KPConv). Further details about them will be given below in the hierarchical feature extraction with PointNet and the hierarchical feature extraction with KPConv sections.

After finishing the downsampling and feature extraction operations, it is time to restore the original dimensionality through upsampling. First, the \(\mathcal{N}_d^U\) neighborhood is reresented by an indexing matrix \(\pmb{N}_{d}^U \in \mathbb{Z}^{R_{d-1} \times \kappa_d^U}\) that defines for each of the \(R_{d-1}\) points in the upsampled space its \(\kappa_d^U\) closest neighbors in the non upsampled space. Then, the \(T_d^U\) upsampling operation is applied. Typically, it is a SharedMLP (i.e., a unitary 1D discrete convolution).

Note that the last upsampling operation is not applied inside the neural network. Instead, the estimations of the network are computed on the first receptive field with structure space \(\pmb{X_1} \in \mathbb{R}^{R_1 \times 3}\) (the one with more points, and thus, closer to the original neighborhood). Finally, the last upsampling is computed to transform the predictions of the neural network (\(\hat{z}\)) back to the original input neighborhood (with an arbitrary number of points).

Hierarchical feature extraction with PointNet

The ConvAutoencPwiseClassif architecture can be configured with

PointNet for feature extraction operations. The downsampling strategy can be

defined through the FeaturesDownsamplingLayer, the upsampling

strategy through the FeaturesUpsamplingLayer, and the feature

extraction through the GroupingPointNetLayer. The JSON below

illustrates how to configure PointNet++-like hierarchical feature extractors

using the VL3D framework. For further details on the original PointNet++

architecture, readers are referred to

the PointNet++ paper (Qi et al., 2017)

.

{

"in_pcloud": [

"/mnt/netapp2/Store_uscciaep/lidar_data/hessigheim/data/Mar18_train.laz"

],

"out_pcloud": [

"/mnt/netapp2/Store_uscciaep/lidar_data/hessigheim/vl3d/hae_X_FPS50K/T1/*"

],

"sequential_pipeline": [

{

"train": "ConvolutionalAutoencoderPwiseClassifier",

"training_type": "base",

"fnames": ["AUTO"],

"random_seed": null,

"model_args": {

"num_classes": 11,

"class_names": ["LowVeg", "ImpSurf", "Vehicle", "UrbanFurni", "Roof", "Facade", "Shrub", "Tree", "Soil/Gravel", "VertSurf", "Chimney"],

"pre_processing": {

"pre_processor": "hierarchical_fps",

"support_strategy_num_points": 50000,

"to_unit_sphere": false,

"support_strategy": "fps",

"support_chunk_size": 2000,

"support_strategy_fast": true,

"center_on_pcloud": true,

"neighborhood": {

"type": "rectangular3D",

"radius": 3.0,

"separation_factor": 0.8

},

"num_points_per_depth": [512, 256, 128, 64, 32],

"fast_flag_per_depth": [false, false, false, false, false],

"num_downsampling_neighbors": [1, 16, 8, 8, 4],

"num_pwise_neighbors": [32, 16, 16, 8, 4],

"num_upsampling_neighbors": [1, 16, 8, 8, 4],

"nthreads": 12,

"training_receptive_fields_distribution_report_path": "*/training_eval/training_receptive_fields_distribution.log",

"training_receptive_fields_distribution_plot_path": "*/training_eval/training_receptive_fields_distribution.svg",

"training_receptive_fields_dir": null,

"receptive_fields_distribution_report_path": "*/training_eval/receptive_fields_distribution.log",

"receptive_fields_distribution_plot_path": "*/training_eval/receptive_fields_distribution.svg",

"receptive_fields_dir": null,

"training_support_points_report_path": "*/training_eval/training_support_points.las",

"support_points_report_path": "*/training_eval/support_points.las"

},

"feature_extraction": {

"type": "PointNet",

"operations_per_depth": [2, 1, 1, 1, 1],

"feature_space_dims": [64, 64, 128, 256, 512, 1024],

"bn": true,

"bn_momentum": 0.0,

"H_activation": ["relu", "relu", "relu", "relu", "relu", "relu"],

"H_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],

"H_regularizer": [null, null, null, null, null, null],

"H_constraint": [null, null, null, null, null, null],

"gamma_activation": ["relu", "relu", "relu", "relu", "relu", "relu"],

"gamma_kernel_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],

"gamma_kernel_regularizer": [null, null, null, null, null, null],

"gamma_kernel_constraint": [null, null, null, null, null, null],

"gamma_bias_enabled": [true, true, true, true, true, true],

"gamma_bias_initializer": ["zeros", "zeros", "zeros", "zeros", "zeros", "zeros"],

"gamma_bias_regularizer": [null, null, null, null, null, null],

"gamma_bias_constraint": [null, null, null, null, null, null]

},

"_structure_alignment": {

"tnet_pre_filters_spec": [64, 128, 256],

"tnet_post_filters_spec": [128, 64, 32],

"kernel_initializer": "glorot_normal"

},

"features_alignment": null,

"downsampling_filter": "gaussian",

"upsampling_filter": "mean",

"upsampling_bn": true,

"upsampling_momentum": 0.0,

"conv1d_kernel_initializer": "glorot_normal",

"output_kernel_initializer": "glorot_normal",

"model_handling": {

"summary_report_path": "*/model_summary.log",

"training_history_dir": "*/training_eval/history",

"features_structuring_representation_dir": "*/training_eval/feat_struct_layer/",

"class_weight": [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],

"training_epochs": 200,

"batch_size": 16,

"checkpoint_path": "*/checkpoint.weights.h5",

"checkpoint_monitor": "loss",

"learning_rate_on_plateau": {

"monitor": "loss",

"mode": "min",

"factor": 0.1,

"patience": 2000,

"cooldown": 5,

"min_delta": 0.01,

"min_lr": 1e-6

}

},

"compilation_args": {

"optimizer": {

"algorithm": "SGD",

"learning_rate": {

"schedule": "exponential_decay",

"schedule_args": {

"initial_learning_rate": 1e-2,

"decay_steps": 15000,

"decay_rate": 0.96,

"staircase": false

}

}

},

"loss": {

"function": "class_weighted_categorical_crossentropy"

},

"metrics": [

"categorical_accuracy"

]

},

"architecture_graph_path": "*/model_graph.png",

"architecture_graph_args": {

"show_shapes": true,

"show_dtype": true,

"show_layer_names": true,

"rankdir": "TB",

"expand_nested": true,

"dpi": 300,

"show_layer_activations": true

}

},

"autoval_metrics": ["OA", "P", "R", "F1", "IoU", "wP", "wR", "wF1", "wIoU", "MCC", "Kappa"],

"training_evaluation_metrics": ["OA", "P", "R", "F1", "IoU", "wP", "wR", "wF1", "wIoU", "MCC", "Kappa"],

"training_class_evaluation_metrics": ["P", "R", "F1", "IoU"],

"training_evaluation_report_path": "*/training_eval/evaluation.log",

"training_class_evaluation_report_path": "*/training_eval/class_evaluation.log",

"training_confusion_matrix_report_path": "*/training_eval/confusion.log",

"training_confusion_matrix_plot_path": "*/training_eval/confusion.svg",

"training_class_distribution_report_path": "*/training_eval/class_distribution.log",

"training_class_distribution_plot_path": "*/training_eval/class_distribution.svg",

"training_classified_point_cloud_path": "*/training_eval/classified_point_cloud.las",

"training_activations_path": null

},

{

"writer": "PredictivePipelineWriter",

"out_pipeline": "*pipe/HAE_T1.pipe",

"include_writer": false,

"include_imputer": false,

"include_feature_transformer": false,

"include_miner": false,

"include_class_transformer": false

}

]

}

The JSON above defines a ConvAutoencPwiseClassif that uses a

hierarchical furthest point sampling strategy with a 3D rectangular

neighborhood and the PointNet operator for feature extraction. It is expected

to work only on the structure space, i.e., the input feature space will be a

single column of ones.

Arguments

- –

training_type Typically it should be

"base"for neural networks. For further details, read the training strategies section.- –

fnames The name of the features that must be given as input to the neural network. For hierarchical autoencoders this list can contain

"ones"to specify whether to include a column of ones in the input space matrix. This architecture does not support empty feature spaces as input, thus, when no features are given, the input feature space must be represented with a column of ones.- –

random_seed Can be used to specify an integer like seed for any randomness-based computation. Mostly to be used for reproducibility purposes. Note that the initialization of a neural network is often based on random distributions. This parameter does not affect those distributions, so it will not guarantee reproducibility for of deep learning models.

- –

model_args The model specification.

- –

num_classess An integer specifying the number of classes involved in the point-wise classification tasks.

- –

class_names The names of the classes involved in the classification task. Each string corresponds to the class associated to its index in the list.

- –

pre_processing How the select and fix stages of the deep learning strategy must be handled. Note that hierarchical autoencoders demand hierarchical receptive fields. See the receptive fields and hierarchical FPS receptive field sections for further details.

- –

feature_extraction The definition of the feature extraction operator. A detailed description of the case when

"type": "PointNet"is given below. For a description of the case when"type": "KPConv"see the KPConv operator documentation.- –

operations_per_depth A list specifying how many operations per depth level. The i-th element of the list gives the number of feature extraction operations at depth i.

- –

feature_space_dims A list specifying the output dimensionality of the feature space after each feature extraction operation. The i-th element of the list gives the dimensionality of the i-th feature extraction operation.

- –

bn Boolean flag to decide whether to enable batch normalization for feature extraction.

- –

bn_momentum Momentum for the moving average of the batch normalization, such that

new_mean = old_mean * momentum + batch_mean * (1 - momentum). See the Keras documentation on batch normalization for more details.- –

H_activation The activation function for the SharedMLP of each feature extraction operation. See the keras documentation on activations for more details.

- –

H_initializer The initialization method for the SharedMLP of each feature extraction operation. See the keras documentation on initializers for more details.

- –

H_regularizer The regularization strategy for the SharedMLP of each feature extraction operation. See the keras documentation on regularizers for more details.

- –

H_constraint The constraints for the SharedMLP of each feature extraction operation. See the keras documentation on constraints for more details.

- –

gamma_activation The constraints for the MLP of each feature extraction operation. See the keras documentation on activations for more details.

- –

gamma_kernel_initializer The initialization method for the MLP of each feature extraction operation (ignoring the bias term). See the keras documentation on initializers for more details.

- –

gamma_kernel_regularizer The regularization strategy for the MLP of each feature extraction operation (ignoring the bias term). See the keras documentation on regularizers for more details.

- –

gamma_kernel_constraint The constraints for the MLP of each feature extraction operation (ignoring the bias term). See the keras documentation on constraints for more details.

- –

gamma_bias_enabled Whether to enable the bias term for the MLP of each feature extraction operation.

- –

gamma_bias_initializer The initialization method for the bias term of the MLP of each feature extraction operation. See the keras documentation on initializers for more details.

- –

gamma_bias_regularizer The regularization strategy for the bias term of the MLP of each feature extraction operation. See the keras documentation on regularizers for more details.

- –

gamma_bias_constraint The constraints for the bias term of the MLP of each feature extraction operation. See the keras documentation on constraints for more details.

- –

- –

structure_alignment When given, this specification will govern the alignment of the structure space.

- –

tnet_pre_filters_spec List defining the number of pre-transformation filters at each depth.

- –

tnet_post_filters_spec List defining the number of post-transformation filters at each depth.

- –

kernel_initializer The kernel initialization method for the structure alignment layers. See the keras documentation on initializers for more details.

- –

- –

features_alignment When given, this specification will govern the alignment of the feature space. It is like the

structure_alignmentdictionary but it is applied to the features instead of the structure space. It must be null to mimic a classical KPConv model.- –

downsampling_filter The type of downsampling filter. See

FeaturesDownsamplingLayer,StridedKPConvLayer,StridedLightKPConvLayer, andInterdimensionalPointTransformerLayerfor more details.- –

upsampling_filter The type of upsampling filter. See

FeaturesUpsamplingLayerandInterdimnsionalPointTransformerLayerfor more details.- –

upsampling_bn Boolean flag to decide whether to enable batch normalization for upsampling transformations.

- –

upsampling_momentum Momentum for the moving average of the upsampling batch normalization, such that

new_mean = old_mean * momentum + batch_mean * (1-momentum). See the Keras documentation on batch normalization for more details.- –

conv1d_kernel_initializer The initialization method for the 1D convolutions during upsampling. See the keras documentation on initializers for more details.

- –

neck The neck block that connects the feature extraction hierarchy with the segmentation head. It can be

nullif no neck is desired. If given, it must be a dictionary governing the neck block.- –

max_depth An integer specifying the depth of the neck block.

- –

hidden_channels A list with the number of hidden channels (output dimensionality) at each depth of the neck block.

- –

kernel_initializer A list with the initialization method for the layers at each depth of the neck block. See the keras documentation on initializers for more details.

- –

kernel_regularizer A list with the regularization method for the layers at each depth of the neck block. See the keras documentation on regularizers for more details.

- –

kernel_constraint A list with the constraint for the layers at each depth of the neck block. See the keras documentation on constraints for more details.

- –

bn_momentum A list with the momentum for the moving average of the batch normalization at each depth of the neck block, such that

new_mean = old_mean * momentum + batch_mean * (1 - momentum). See the Keras documentation on batch normalization for more details.- –

activation A list with the name of the activation function to be used at each depth of the neck block. These names must match those listed in the Keras documentation on activations.

- –

- –

contextual_head The specification of the contextual head to be built on top of the standard output head of the neural network. If not given, then no contextual head will be used at all. Note that the contextual head is implemented as a

ContextualPointLayer.- –

multihead Let \(\mathcal{L}^{(1)}\) be the loss function from the standard output head and \(\mathcal{L}^{(2)}\) the loss function from the contextual head output. If the architecture has a single head (i.e., multihead set to false), then the model’s loss function will be \(\mathcal{L} = \mathcal{L}^{(2)}\). However, if the architecture is multiheaded (i.e., multihead set to true), then the model’s loss function will be \(\mathcal{L} = \mathcal{L}^{(1)} + \mathcal{L}^{(2)}\) .

- –

max_depth The number of contextual point layers in the contextual head.

- –

hidden_channels A list with the dimensionality of the hidden feature space for each contextual point layer.

- –

output_channels A list with the dimensionality of the output feature space for each contextual point layer.

- –

bn A list governing whether to include batch normalization at each contextual point layer.

- –

bn_momentum A list with the momentum for the batch normalization of each contextual point layer such that

new_mean = old_mean * momentum + batch_mean * (1 - momentum). See the Keras documentation on batch normalization for more details.- –

bn_along_neighbors A list governing whether to apply the batch normalization to the neighbors instead of the features, when possible.

- –

activation A list with the activation function for each contextual point layer. See the keras documentation on activations for more details.

- –

distance A list with the distance that must be used at each contextual point layer. Supported values are

"euclidean"and"squared".- –

ascending_order Whether to force distance-based ascending order of the neighborhoods (

true) or not (false).- –

aggregation A list with the aggregation strategy for each contextual point layer, either

"max"or"mean".- –

initializer A list with the initializer for the matrices and vectors of weights. See Keras documentation on layer initializers for further details.

- –

regularizer A list with the regularizer for the matrices and vectors of weights. See the keras documentation on regularizers for more details.

- –

constraint A list with the constraint for the matrices and vectors of weights. See the keras documentation on constraints for more details.

- –

- –

output_kernel_initializer The initialization method for the final 1D convolution that computes the point-wise outputs of the neural network. See the keras documentation on initializers for more details.

- –

model_handling Define how to handle the model, i.e., not the architecture itself but how it must be used. See the description of PointNet model handling for more details.

- –

compilation_args The arguments governing the model’s compilation. They include the optimizer, the loss function and the metrics to be monitored during training. See the optimizers section and losses section for further details.

- –

training_evaluation_metrics - –

training_class_evaluation_metrics - –

training_evaluation_report_path - –

training_class_evaluation_report_path - –

training_confusion_matrix_report_path - –

training_confusion_matrix_report_plot - –

training_class_distribution_report_path - –

training_classified_point_cloud_path - –

training_activations_path

- –

Hierarchical feature extraction with KPConv

The ConvAutoencPwiseClassif architecture can be configured with

Kernel Point Convolution (KPConv) for feature extraction operations. The

downsampling strategy can be defined through the

FeaturesDownsamplingLayer or the StridedKPConvLayer,

the upsampling strateg through the FeaturesUpsamplingLayer, and

the feature extraction through the KPConvLayer. The JSON below

illustrates how to configure KPConv-based hierarchical feature extractor using

the VL3D framework. For further details on the original KPConv architecture,

readers are referred to

the KPConv paper (Thomas et al., 2019)

.

{

"in_pcloud": [

"/mnt/netapp2/Store_uscciaep/lidar_data/hessigheim/vl3d/mined/Mar18_train_hsv_std.laz"

],

"out_pcloud": [

"/mnt/netapp2/Store_uscciaep/lidar_data/hessigheim/vl3d/kpconv_R/T1/*"

],

"sequential_pipeline": [

{

"train": "ConvolutionalAutoencoderPwiseClassifier",

"training_type": "base",

"fnames": ["Reflectance", "ones"],

"random_seed": null,

"model_args": {

"fnames": ["Reflectance", "ones"],

"num_classes": 11,

"class_names": ["LowVeg", "ImpSurf", "Vehicle", "UrbanFurni", "Roof", "Facade", "Shrub", "Tree", "Soil/Gravel", "VertSurf", "Chimney"],

"pre_processing": {

"pre_processor": "hierarchical_fps",

"support_strategy_num_points": 60000,

"to_unit_sphere": false,

"support_strategy": "fps",

"support_chunk_size": 2000,

"support_strategy_fast": true,

"center_on_pcloud": true,

"neighborhood": {

"type": "sphere",

"radius": 3.0,

"separation_factor": 0.8

},

"num_points_per_depth": [512, 256, 128, 64, 32],

"fast_flag_per_depth": [false, false, false, false, false],

"num_downsampling_neighbors": [1, 16, 8, 8, 4],

"num_pwise_neighbors": [32, 16, 16, 8, 4],

"num_upsampling_neighbors": [1, 16, 8, 8, 4],

"nthreads": 12,

"training_receptive_fields_distribution_report_path": "*/training_eval/training_receptive_fields_distribution.log",

"training_receptive_fields_distribution_plot_path": "*/training_eval/training_receptive_fields_distribution.svg",

"training_receptive_fields_dir": null,

"receptive_fields_distribution_report_path": "*/training_eval/receptive_fields_distribution.log",

"receptive_fields_distribution_plot_path": "*/training_eval/receptive_fields_distribution.svg",

"receptive_fields_dir": null,

"training_support_points_report_path": "*/training_eval/training_support_points.las",

"support_points_report_path": "*/training_eval/support_points.las"

},

"feature_extraction": {

"type": "KPConv",

"operations_per_depth": [2, 1, 1, 1, 1],

"feature_space_dims": [64, 64, 128, 256, 512, 1024],

"bn": true,

"bn_momentum": 0.0,

"activate": true,

"sigma": [3.0, 3.0, 3.0, 3.0, 3.0, 3.0],

"kernel_radius": [3.0, 3.0, 3.0, 3.0, 3.0, 3.0],

"num_kernel_points": [15, 15, 15, 15, 15, 15],

"deformable": [false, false, false, false, false, false],

"W_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],

"W_regularizer": [null, null, null, null, null, null],

"W_constraint": [null, null, null, null, null, null],

"unary_convolution_wrapper": {

"activation": "relu",

"initializer": "glorot_uniform",

"bn": true,

"bn_momentum": 0.98,

"feature_dim_divisor": 2

}

},

"structure_alignment": null,

"features_alignment": null,

"downsampling_filter": "strided_kpconv",

"upsampling_filter": "mean",

"upsampling_bn": true,

"upsampling_momentum": 0.0,

"conv1d_kernel_initializer": "glorot_normal",

"output_kernel_initializer": "glorot_normal",

"model_handling": {

"summary_report_path": "*/model_summary.log",

"training_history_dir": "*/training_eval/history",

"kpconv_representation_dir": "*/training_eval/kpconv_layers/",

"skpconv_representation_dir": "*/training_eval/skpconv_layers/",

"class_weight": [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],

"training_epochs": 300,

"batch_size": 16,

"checkpoint_path": "*/checkpoint.weights.h5",

"checkpoint_monitor": "loss",

"learning_rate_on_plateau": {

"monitor": "loss",

"mode": "min",

"factor": 0.1,

"patience": 2000,

"cooldown": 5,

"min_delta": 0.01,

"min_lr": 1e-6

}

},

"compilation_args": {

"optimizer": {

"algorithm": "SGD",

"learning_rate": {

"schedule": "exponential_decay",

"schedule_args": {

"initial_learning_rate": 1e-2,

"decay_steps": 15000,

"decay_rate": 0.96,

"staircase": false

}

}

},

"loss": {

"function": "class_weighted_categorical_crossentropy"

},

"metrics": [

"categorical_accuracy"

]

},

"architecture_graph_path": "*/model_graph.png",

"architecture_graph_args": {

"show_shapes": true,

"show_dtype": true,

"show_layer_names": true,

"rankdir": "TB",

"expand_nested": true,

"dpi": 300,

"show_layer_activations": true

}

},

"autoval_metrics": ["OA", "P", "R", "F1", "IoU", "wP", "wR", "wF1", "wIoU", "MCC", "Kappa"],

"training_evaluation_metrics": ["OA", "P", "R", "F1", "IoU", "wP", "wR", "wF1", "wIoU", "MCC", "Kappa"],

"training_class_evaluation_metrics": ["P", "R", "F1", "IoU"],

"training_evaluation_report_path": "*/training_eval/evaluation.log",

"training_class_evaluation_report_path": "*/training_eval/class_evaluation.log",

"training_confusion_matrix_report_path": "*/training_eval/confusion.log",

"training_confusion_matrix_plot_path": "*/training_eval/confusion.svg",

"training_class_distribution_report_path": "*/training_eval/class_distribution.log",

"training_class_distribution_plot_path": "*/training_eval/class_distribution.svg",

"training_classified_point_cloud_path": "*/training_eval/classified_point_cloud.las",

"training_activations_path": null

},

{

"writer": "PredictivePipelineWriter",

"out_pipeline": "*pipe/KPC_T1.pipe",

"include_writer": false,

"include_imputer": false,

"include_feature_transformer": false,

"include_miner": false,

"include_class_transformer": false

}

]

}

The JSON above defines a ConvAutoencPwiseClassif that uses a

hierarchical furthest point sampling strategy with a 3D spherical neighborhood

and the KPConv operator for feature extraction. It is expected to work on a

feature space with a column of ones (for feature-unbiased geometric features)

and another of reflectances.

Arguments

- –

training_type Typically it should be

"base"for neural networks. For further details, read the training strategies section.

- –

fnames The name of the features that must be given as input to the neural network. For hierarchical autoencoders this list can contain

"ones"to specify whether to include a column of ones in the input space matrix. This architecture does not support empty feature spaces as input, thus, when no features are given, the input feature space must be represented with a column of ones. NOTE that, for technical reasons, the feature names should also be given inside themodel_argsdictionary.

- –

random_seed Can be used to specify an integer like seed for any randomness-based computation. Mostly to be used for reproducibility purposes. Note that the initialization of a neural network is often based on random distributions. This parameter does not affect those distributions, so it will not guarantee reproducibility for of deep learning models.

- –

model_args The model specification.

- –

fnames The feature names must be given again inside the

model_argsdictionary due to technical reasons.

- –

num_classess An integer specifying the number of classes involved in the point-wise classification tasks.

- –

class_names The names of the classes involved in the classification task. Each string corresponds to the class associated to its index in the list.

- –

pre_processing How the select and fix stages of the deep learning strategy must be handled. Note that hierarchical autoencoders demand hierarchical receptive fields. See the receptive fields and hierarchical FPS receptive field sections for further details.

- –

feature_extraction The definition of the feature extraction operator. A detailed description of the case when

"type": "KPConv"is given below. For a description of the case when"type": "PointNet"see the PointNet operator documentation.- –

operations_per_depth A list specifying how many operations per depth level. The i-th element of the list gives the number of feature extraction operations at depth i.

- –

feature_space_dims A list specifying the output dimensionality of the feature space after each feature extration operation. The i-th element of the list gives the dimensionality of the i-th feature extraction operation.

- –

bn Boolean flag to decide whether to enable batch normalization for feature extraction.

- –

bn_momentum Momentum for the moving average of the batch normalization, such that

new_mean = old_mean * momentum + batch_mean * (1 - momentum). See the Keras documentation on batch normalization for more details.

- –

activate Trueto activate the output of the KPConv,Falseotherwise.

- –

sigma The influence distance of the kernel points for each KPConv.

- –

kernel_radius The radius of the ball where the kernel points belong for each KPConv.

- –

num_kernel_points The number of points (i.e., structure space dimensionality) for each KPConv kernel.

- –

deformable Whether the structure space of the KPConv will be optimized (

True) or not (False), for each KPConv.- –

W_initializer The initialization method for the weights of each KPConv. See the keras documentation on initializers for more details.

- –

W_regularizer The regularization strategy for weights of each KPConv. See the keras documentation on regularizers for more details.

- –

W_constraint The constraints of the weights of each KPConv. See the keras documentation on constraints for more details.

- –

unary_convolution_wrapper The specification of the unary convolutions (aka SharedMLPs) to be applied before the KPConv layer to half the feature dimensionality and also after to restore it.

- –

activation The activation function for each unary convolution / SharedMLP. See the keras documentation on activations for more details.

- –

activate_postwrap Whether to include an activation function after the unary convolution (after the batch normalization, if any).

- –

initializer The initialization method for the point-wise unary convolutions (SharedMLPs). See the keras documentation on initializers for more details.

- –

bn Whether to enable batch normalization (

True) or not (False).- –

bn_momentum Momentum for the moving average of the batch normalization, such that

new_mean = old_mean * momentum + batch_mean * (1 - momentum). See the Keras documentation on batch normalization for more details.- –

postwrap_bn Whether to include a batch normalization layer after the unary convolution.

- –

feature_dim_divisor The divisor for the dimensionality in the unary convolution wrapper. The number of features will be divided by this number. The default is \(2\).

- –

- –

- –

structure_alignment When given, this specification will govern the alignment of the structure space.

- –

tnet_pre_filters_spec List defining the number of pre-transformation filters at each depth.

- –

tnet_post_filters_spec List defining the number of post-transformation filters at each depth.

- –

kernel_initializer The kernel initialization method for the structure alignment layers. See the keras documentation on initializers for more details.

- –

- –

features_alignment When given, this specification will govern the alignment of the feature space. It is like the

structure_alignmentdictionary but it is applied to the features instead of the structure space.- –

downsampling_filter The type of downsampling filter. See

StridedKPConvLayer,FeaturesDownsamplingLayer, andInterdimensionalPointTransformerLayerfor more details.- –

upsampling_filter The type of upsampling filter. See

FeaturesUpsamplingLayerandInterdimensionalPointTransformerLayerfor more details.

- –

upsampling_bn Boolean flag to decide whether to enable batch normalization for upsampling transformations.

- –

upsampling_momentum Momentum for the moving average of the upsampling batch normalization, such that

new_mean = old_mean * momentum + batch_mean * (1-momentum). See the Keras documentation on batch normalization for more details.

- –

conv1d_kernel_initializer The initialization method for the 1D convolutions during upsampling. See the keras documentation on initializers for more details.

- –

output_kernel_initializer The initialization method for the final 1D convolution that computes the point-wise outputs of the neural network. See the keras documentation on initializers for more details.

- –

model_handling Define how to handle the model, i.e., not the architecture itself but how it must be used. See the description of PointNet model handling for more details. The main difference for hierarchical autoencoders using KPConv are:

- –

kpconv_representation_dir Path where the plots and CSV data representing the KPConv kernels will be stored.

- –

skpconv_representation_dir Path where the plots and CSV data representing the strided KPConv kernels will be stored.

- –

- –

compilation_args The arguments governing the model’s compilation. They include the optimizer, the loss function and the metrics to be monitored during training. See the optimizers section and losses section for further details.

- –

training_evaluation_metrics - –

training_class_evaluation_metrics - –

training_evaluation_report_path - –

training_class_evaluation_report_path - –

training_confusion_matrix_report_path - –

training_confusion_matrix_report_plot - –

training_class_distribution_report_path - –

training_classified_point_cloud_path - –

training_activations_path

- –

Hierarchical feature extraction with SFL-NET

The ConvAutoencPwiseClassif architecture can be configured as a

Slight Filter Learning Network (SFL-NET). This neural network for 3D point

clouds was introduced in

the SFL-NET paper (Li et al., 2023)

. It uses a simplified version of KPConv and changes the shared MLPs by

hourglasses in the upsampling and final layers. On top of that, it uses the

hourglass layer to define a residual hourglass block that wraps each

feature extraction layer at the different depths of the encoding hierarchy.

The JSON below illustrates how to configure a SFL-NET-like hierarchical feature

extractor using the VL3D framework.

{

"in_pcloud": [

"/oldext4/lidar_data/vl3dhack/data/dales/train/5080_54435.laz"

],

"out_pcloud": [

"/oldext4/lidar_data/vl3dhack/multiclass/out/DL_SFLNET/T1/*"

],

"sequential_pipeline": [

{

"class_transformer": "ClassReducer",

"on_predictions": false,

"input_class_names": ["noclass", "ground", "vegetation", "cars", "trucks", "powerlines", "fences", "poles", "buildings"],

"output_class_names": ["ground", "vegetation", "buildings", "powerlines", "objects", "noclass"],

"class_groups": [["ground"], ["vegetation"], ["buildings"], ["powerlines"], ["cars", "trucks", "fences", "poles"], ["noclass"]],

"report_path": "*class_reduction.log",

"plot_path": "*class_reduction.svg"

},

{

"train": "ConvolutionalAutoencoderPwiseClassifier",

"training_type": "base",

"fnames": ["ones"],

"random_seed": null,

"model_args": {

"fnames": ["ones"],

"num_classes": 6,

"class_names": ["ground", "vegetation", "buildings", "powerlines", "objects", "noclass"],

"pre_processing": {

"pre_processor": "hierarchical_fps",

"support_strategy_num_points": 200000,

"to_unit_sphere": false,

"support_strategy": "fps",

"support_chunk_size": 10000,

"support_strategy_fast": true,

"receptive_field_oversampling": {

"min_points": 2,

"strategy": "nearest",

"k": 3,

"radius": 0.5

},

"center_on_pcloud": true,

"neighborhood": {

"type": "sphere",

"radius": 6.0,

"separation_factor": 0.8

},

"num_points_per_depth": [256, 128, 64, 32, 16],

"fast_flag_per_depth": [false, false, false, false, false],

"num_downsampling_neighbors": [1, 16, 16, 16, 16],

"num_pwise_neighbors": [16, 16, 16, 16, 16],

"num_upsampling_neighbors": [1, 16, 16, 16, 16],

"nthreads": -1,

"training_receptive_fields_distribution_report_path": "*/training_eval/training_receptive_fields_distribution.log",

"training_receptive_fields_distribution_plot_path": "*/training_eval/training_receptive_fields_distribution.svg",

"training_receptive_fields_dir": "*/training_eval/training_rf/",

"receptive_fields_distribution_report_path": "*/training_eval/receptive_fields_distribution.log",

"receptive_fields_distribution_plot_path": "*/training_eval/receptive_fields_distribution.svg",

"_receptive_fields_dir": "*/training_eval/receptive_fields/",

"training_support_points_report_path": "*/training_eval/training_support_points.las",

"support_points_report_path": "*/training_eval/support_points.las"

},

"feature_extraction": {

"type": "LightKPConv",

"operations_per_depth": [2, 1, 1, 1, 1],

"feature_space_dims": [64, 64, 128, 256, 512, 1024],

"bn": true,

"bn_momentum": 0.98,

"activate": true,

"sigma": [6.0, 6.0, 7.5, 9.0, 10.5, 12.0],

"kernel_radius": [6.0, 6.0, 6.0, 6.0, 6.0, 6.0],

"num_kernel_points": [15, 15, 15, 15, 15, 15],

"deformable": [false, false, false, false, false, false],

"W_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],

"W_regularizer": [null, null, null, null, null, null],

"W_constraint": [null, null, null, null, null, null],

"A_trainable": [true, true, true, true, true ,true],

"A_regularizer": [null, null, null, null, null, null],

"A_constraint": [null, null, null, null, null, null],

"A_initializer": ["ones", "ones", "ones", "ones", "ones", "ones"],

"unary_convolution_wrapper": null,

"hourglass_wrapper": {

"internal_dim": [2, 2, 4, 16, 32, 64],

"parallel_internal_dim": [8, 8, 16, 32, 64, 128],

"activation": ["relu", "relu", "relu", "relu", "relu", "relu"],

"activation2": [null, null, null, null, null, null],

"regularize": [true, true, true, true, true, true],

"W1_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],

"W1_regularizer": [null, null, null, null, null, null],

"W1_constraint": [null, null, null, null, null, null],

"W2_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],

"W2_regularizer": [null, null, null, null, null, null],

"W2_constraint": [null, null, null, null, null, null],

"loss_factor": 0.1,

"subspace_factor": 0.125,

"feature_dim_divisor": 4,

"bn": false,

"bn_momentum": 0.98,

"out_bn": true,

"out_bn_momentum": 0.98,

"out_activation": "relu"

}

},

"features_alignment": null,

"downsampling_filter": "strided_lightkpconv",

"upsampling_filter": "mean",

"upsampling_bn": true,

"upsampling_momentum": 0.98,

"upsampling_hourglass": {

"activation": "relu",

"activation2": null,

"regularize": true,

"W1_initializer": "glorot_uniform",

"W1_regularizer": null,

"W1_constraint": null,

"W2_initializer": "glorot_uniform",

"W2_regularizer": null,

"W2_constraint": null,

"loss_factor": 0.1,

"subspace_factor": 0.125

},

"conv1d": false,

"conv1d_kernel_initializer": "glorot_normal",

"output_kernel_initializer": "glorot_normal",

"model_handling": {

"summary_report_path": "*/model_summary.log",

"training_history_dir": "*/training_eval/history",

"kpconv_representation_dir": "*/training_eval/kpconv_layers/",

"skpconv_representation_dir": "*/training_eval/skpconv_layers/",

"lkpconv_representation_dir": "*/training_eval/lkpconv_layers/",

"slkpconv_representation_dir": "*/training_eval/slkpconv_layers/",

"class_weight": [1.0, 1.0, 1.0, 1.0, 1.0, 0.0],

"training_epochs": 300,

"batch_size": 64,

"training_sequencer": {

"type": "DLSequencer",

"random_shuffle_indices": true,

"augmentor": {

"transformations": [

{

"type": "Rotation",

"axis": [0, 0, 1],

"angle_distribution": {

"type": "uniform",

"start": -3.141592,

"end": 3.141592

}

},

{

"type": "Scale",

"scale_distribution": {

"type": "uniform",

"start": 0.99,

"end": 1.01

}

},

{

"type": "Jitter",

"noise_distribution": {

"type": "normal",

"mean": 0,

"stdev": 0.001

}

}

]

}

},

"prediction_reducer": {

"reduce_strategy" : {

"type": "MeanPredReduceStrategy"

},

"select_strategy": {

"type": "ArgMaxPredSelectStrategy"

}

},

"checkpoint_path": "*/checkpoint.weights.h5",

"checkpoint_monitor": "loss",

"learning_rate_on_plateau": {

"monitor": "loss",

"mode": "min",

"factor": 0.1,

"patience": 2000,

"cooldown": 5,

"min_delta": 0.01,

"min_lr": 1e-6

}

},

"compilation_args": {

"optimizer": {

"algorithm": "Adam",

"learning_rate": {

"schedule": "exponential_decay",

"schedule_args": {

"initial_learning_rate": 1e-2,

"decay_steps": 9000,

"decay_rate": 0.96,

"staircase": false

}

}

},

"loss": {

"function": "class_weighted_categorical_crossentropy"

},

"metrics": [

"categorical_accuracy"

]

},

"architecture_graph_path": "*/model_graph.png",

"architecture_graph_args": {

"show_shapes": true,

"show_dtype": true,

"show_layer_names": true,

"rankdir": "TB",

"expand_nested": true,

"dpi": 300,

"show_layer_activations": true

}

},

"autoval_metrics": ["OA", "P", "R", "F1", "IoU", "wP", "wR", "wF1", "wIoU", "MCC", "Kappa"],

"training_evaluation_metrics": ["OA", "P", "R", "F1", "IoU", "wP", "wR", "wF1", "wIoU", "MCC", "Kappa"],

"training_class_evaluation_metrics": ["P", "R", "F1", "IoU"],

"training_evaluation_report_path": "*/training_eval/evaluation.log",

"training_class_evaluation_report_path": "*/training_eval/class_evaluation.log",

"training_confusion_matrix_report_path": "*/training_eval/confusion.log",

"training_confusion_matrix_plot_path": "*/training_eval/confusion.svg",

"training_class_distribution_report_path": "*/training_eval/class_distribution.log",

"training_class_distribution_plot_path": "*/training_eval/class_distribution.svg",

"training_classified_point_cloud_path": "*/training_eval/classified_point_cloud.las",

"training_activations_path": null

},

{

"writer": "PredictivePipelineWriter",

"out_pipeline": "*/model/SFLNET.pipe",

"include_writer": false,

"include_imputer": true,

"include_feature_transformer": true,

"include_miner": true,

"include_class_transformer": false,

"include_clustering": false,

"ignore_predictions": false

}

]

}

The JSON above defines a ConvAutoencPwiseClassif that uses a

hierarchical furthest point sampling strategy with a 3D spherical neighborhood

to prepare the input for a SFL-NET model. The subspace and loss factors are

configured to \(\alpha=1/8\) and \(\beta=1/10\), as recommended in

the SFL-NET paper (Li et al., 2023)

.

Arguments

- –

training_type Typically it should be

"base"for neural networks. For further details, read the training strategies section.- –

fnames - –

random_seed - –

model_args The model specification.

- –

fnames - –

num_classes - –

class_names - –

pre_processing - –

feature_extraction The definition of the feature extraction operator. A detailed description of the case when

"type": "LightKPConv"and all the shared MLPs / unary convolutions are replaced by hourglass layers and hourglass residual blocks is given below. For a description of the case when"type": "KPConv"see the KPConv operator documentation. For a description of the general case"type": "LightKPConv"see the LightKPConv operator documentation .- –

operations_per_depth - –

feature_space_dims - –

bn - –

bn_momentum - –

activate - –

sigma - –

kernel_radius - –

num_kernel_points - –

deformable - –

W_initializer The initialization method for the weights of each light KPConv. See the keras documentation on initializers for more details.

- –

W_regularizer The regularization strategy for the weights of each light KPConv. See the keras documentation on regularizers for more details.

- –

W_constraint The constraints of the weights of each light KPConv. See the keras documentation on constraints for more details.

- –

unary_convolution_wrapper To mimic a SFL-NET this specification must be set to null because SFL-NET uses a residual hourglass block instead of shared MLPs.

- –

hourglass_wrapper The specification of how to use hourglass layers to wrap the feature extraction layers. To mimic a SFL-NET it is necessary to use an hourglass wrapper and avoid unary convolutions at all.

- –

internal_dim A list with the internal dimensions for the first transform in a

HourglassLayer. NOTE that this value is ignored when a subspace factor \(\alpha\) is given.- –

parallel_internal_dim A list with the internal dimensions for the

HourglassLayerin the residual block. NOTE that this value is ignored when a subspace factor \(\alpha\) is given.- –

activation The first activation function (i.e., \(\sigma_1\)) for each

HourglassLayer. See the keras documentation on activations for more details.- –

activation2 The second activation function (i.e., \(\sigma_2\)) for each

HourglassLayer. See the keras documentation on activations for more details.- –

activate_postwrap Whether to include an activation function to finish the wrapping of the feature extractor operator.

- –

activate_residual Whether to include an activation function to finish the residual block. Note that the standard practice is to avoid activation functions at the end of residual feature extraction blocks to keep them linear.

- –

regularize Whether to regularize each

HourglassLayerby adding \(\beta + \mathcal{L}_h\) to the loss function (True) or not (False).- –

spectral_strategy What strategy use to compute the spectral norm. It can be either “unsafe” (fast but might break during training), “safe” (will work during training but can be twice slower), or “approx” (as fast as unsafe but computing the approximated norm after applying a small tikhonov regularization to prevent numerical issues, DEFAULT).

- –

W1_initializer The initialization method for the first matrix of weights for each

HourglassLayer. See the keras documentation on initializers for more details.- –

W1_regularizer The regularization strategy for the first matrix of weights for each

HourglassLayer. See the keras documentation on regularizers for more details.- –

W1_constraint The constraint of the first matrix of weights for each

HourglassLayer. See the keras documentation on constraints for more details.- –

W2_initializer The initialization method for the second matrix of weights for each

HourglassLayer. See the keras documentation on initializers for more details.- –

W2_regularizer The regularization strategy for the second matrix of weights for each

HourglassLayer. See the keras documentation on regularizers for more details.- –

W2_constraint The constraint of the second matrix of weights for each

HourglassLayer. See the keras documentation on constraints for more details.- –

loss_factor The loss factor \(\beta\) for any

HourglassLayer. It governs the impact of the extra term \(\beta \mathcal{L}_h\) in the loss function. NOTE that the loss factor will only be considered when regularize is set toTrue.- –

subspace_factor The subspace factor \(\alpha\) for any

HourglassLayer. When given, the internal dimensionality \(D_h\) will be:\[D_h = \alpha \; \max \; \left\{D_{\mathrm{in}}, D_{\mathrm{out}}\right\}\]NOTE that when given, any specification of the internal dimensionalities will be replaced by the values derived by applying the subspace factor.

- –

feature_dim_divisor The divisor to determine the output dimensionality of the pre-wrapper hourglass layer. The dimensionality will be calculated as \(D_{\text{in}} / \text{feature_dim_divisor}\).

- –

bn Whether to include batch normalization to the main branch before merging with the residual block.

- –

bn_momentum The momentum for the moving average of the batch normalization (as explained for PointNet++ bn_momentum specification ).

- –

out_bn Whether to include a batch normalization layer after the linear superposition of the residual block with the main branch (

true) or not (false).- –

merge_bn Alias for

out_bn. Note that if both are specified,out_bnhas preference overmerge_bn.- –

out_bn_momentum The momentum for the moving average of the batch normalization after the linear superposition of the residual block with the main branch (as explained for PointNet++ bn_momentum specification ).

- –

out_activation Whether to include an activation layer after the linear superposition (and after the batch normalization, if any) of the residual block with the main branch (

true) or not (false).

- –

- –

- –

features_alignment It must be null to mimic a SFL-NET model. See KPConv arguments documentation for further details.

- –

downsampling_filter It must be configured to

"strided_lightkpconv"(seeStridedLightKPConvLayer) to mimic a SFL-NET model.- –

upsampling_filter The original upsampling strategy for KPConv and derived architectures is

"nearest"(i.e., nearest upsampling). However, in VL3D++ examples we often use"mean"for our baseline models because we found it yields better results. SeeFeaturesUpsamplingLayerandInterdimensionalPointTransformerLayerfor more details.- –

upsampling_bn - –

upsampling_momentum - –

conv1d Boolean flag governing whether to use unary convolutions (shared MLPs) to wrap the hourglass or not. SFL-NET models use hourglass layers instead of shared MLPs so it must be set to

Falsewhen mimicking this model.- –

conv1d_kernel_initializer - –

output_kernel_initializer - –

model_handling See KPConv arguments documentation and LightKPConv arguments documentation .

- –

compilation_args - –

training_evaluation_metrics - –

training_class_evaluation_metrics - –

training_evaluation_report_path - –

training_class_evaluation_report_path - –

training_confusion_matrix_report_path - –

training_confusion_matrix_report_plot - –

training_class_distribution_report_path - –

training_classified_point_cloud_path - –

training_activations_path

- –

Hierarchical feature extraction with LightKPConv

The ConvAutoencPwiseClassif architecture can be configured using a

light-weight version of the KPConvLayer that for \(K\) kernel

points uses only two matrices: 1) the weights

\(\pmb{W} \in \mathbb{R}^{D_{\mathrm{in}} \times D_{\mathrm{out}}}\) and

2) the scale factors

\(\pmb{A} \in \mathbb{R}^{m_q \times D_{\mathrm{in}}}\). Furthe details

can be seen in the LightKPConvLayer documentation. The main

difference with the classical KPConvLayer consists in updating

the original equation:

to the light-weight version:

Note that, when all the shared MLPs are replaced by hourglass blocks, the

LightKPConvLayer can be used in the context of a

ConvAutoencPwiseClassif model to mimic the SFL-NET model as described

in the

hierarchical feature extraction with SFL-NET section

. The rest of this section is devoted to describe the general usage of the

LightKPConvLayer. The JSON bellow illustrates how to configure

LightKPConv-based hierarchical feature extractors using the VL3D framework.

{

"in_pcloud": [

"/oldext4/lidar_data/vl3dhack/data/dales/train/5080_54435.laz"

],

"out_pcloud": [

"/oldext4/lidar_data/vl3dhack/multiclass/out/DL_LKPC/T1/*"

],

"sequential_pipeline": [

{

"class_transformer": "ClassReducer",

"on_predictions": false,

"input_class_names": ["noclass", "ground", "vegetation", "cars", "trucks", "powerlines", "fences", "poles", "buildings"],

"output_class_names": ["ground", "vegetation", "buildings", "powerlines", "objects", "noclass"],

"class_groups": [["ground"], ["vegetation"], ["buildings"], ["powerlines"], ["cars", "trucks", "fences", "poles"], ["noclass"]],

"report_path": "*class_reduction.log",

"plot_path": "*class_reduction.svg"

},

{

"train": "ConvolutionalAutoencoderPwiseClassifier",

"training_type": "base",

"fnames": ["ones"],

"random_seed": null,

"model_args": {

"fnames": ["ones"],

"num_classes": 6,

"class_names": ["ground", "vegetation", "buildings", "powerlines", "objects", "noclass"],

"pre_processing": {

"pre_processor": "hierarchical_fps",

"support_strategy_num_points": 200000,

"to_unit_sphere": false,

"support_strategy": "fps",

"support_chunk_size": 10000,

"support_strategy_fast": true,

"receptive_field_oversampling": {

"min_points": 2,

"strategy": "nearest",

"k": 3,

"radius": 0.5

},

"center_on_pcloud": true,

"neighborhood": {

"type": "sphere",

"radius": 6.0,

"separation_factor": 0.8

},

"num_points_per_depth": [256, 128, 64, 32, 16],

"fast_flag_per_depth": [false, false, false, false, false],

"num_downsampling_neighbors": [1, 16, 16, 16, 16],

"num_pwise_neighbors": [16, 16, 16, 16, 16],

"num_upsampling_neighbors": [1, 16, 16, 16, 16],

"nthreads": -1,

"training_receptive_fields_distribution_report_path": "*/training_eval/training_receptive_fields_distribution.log",

"training_receptive_fields_distribution_plot_path": "*/training_eval/training_receptive_fields_distribution.svg",

"training_receptive_fields_dir": "*/training_eval/training_rf/",

"receptive_fields_distribution_report_path": "*/training_eval/receptive_fields_distribution.log",

"receptive_fields_distribution_plot_path": "*/training_eval/receptive_fields_distribution.svg",

"_receptive_fields_dir": "*/training_eval/receptive_fields/",

"training_support_points_report_path": "*/training_eval/training_support_points.las",

"support_points_report_path": "*/training_eval/support_points.las"

},

"feature_extraction": {

"type": "LightKPConv",

"operations_per_depth": [2, 1, 1, 1, 1],

"feature_space_dims": [64, 64, 128, 256, 512, 1024],

"bn": true,

"bn_momentum": 0.98,

"activate": true,

"sigma": [6.0, 6.0, 7.5, 9.0, 10.5, 12.0],

"kernel_radius": [6.0, 6.0, 6.0, 6.0, 6.0, 6.0],

"num_kernel_points": [15, 15, 15, 15, 15, 15],

"deformable": [false, false, false, false, false, false],

"W_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],

"W_regularizer": [null, null, null, null, null, null],

"W_constraint": [null, null, null, null, null, null],

"A_trainable": [true, true, true, true, true ,true],

"A_regularizer": [null, null, null, null, null, null],

"A_constraint": [null, null, null, null, null, null],

"A_initializer": ["ones", "ones", "ones", "ones", "ones", "ones"],

"_unary_convolution_wrapper": {

"activation": "relu",

"initializer": "glorot_uniform",

"bn": true,

"bn_momentum": 0.98,

"feature_dim_divisor": 2

},

"hourglass_wrapper": {

"internal_dim": [2, 2, 4, 16, 32, 64],

"parallel_internal_dim": [8, 8, 16, 32, 64, 128],

"activation": ["relu", "relu", "relu", "relu", "relu", "relu"],

"activation2": [null, null, null, null, null, null],

"regularize": [true, true, true, true, true, true],

"W1_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],

"W1_regularizer": [null, null, null, null, null, null],

"W1_constraint": [null, null, null, null, null, null],

"W2_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],

"W2_regularizer": [null, null, null, null, null, null],

"W2_constraint": [null, null, null, null, null, null],

"loss_factor": 0.1,

"subspace_factor": 0.125,

"feature_dim_divisor": 4,

"bn": false,

"bn_momentum": 0.98,

"out_bn": true,

"out_bn_momentum": 0.98,

"out_activation": "relu"

}

},

"features_alignment": null,

"downsampling_filter": "strided_lightkpconv",

"upsampling_filter": "mean",

"upsampling_bn": true,

"upsampling_momentum": 0.98,

"_upsampling_hourglass": {

"activation": "relu",

"activation2": null,