SFLNet-like model for point-wise segmentation of jaw teeth

Data

The point clouds used in this example are derived from the meshes available on the 3D GitHub page or the OSF download page. The following Python script extracts the per-vertex labels from the JSON file of each sample:

#!/bin/python
# ------------------------------------------------------------------------- #
# AUTHOR: Alberto M. Esmoris Pena #
# BRIEF: Script to assist to_laz.sh in parsing the JSON with references #
# Must NOT be called directly, only through to_laz.sh #
# ------------------------------------------------------------------------- #
import numpy as np
import json
import sys

if __name__ == '__main__':
    with open(sys.argv[1], 'r') as jsonf:
        JSON = json.load(jsonf)
    y = np.array(JSON['labels'])
    for yi in y:
        print(yi)

The Python script is then called from a Bash script that also invokes LAStools to write the LAZ files.

#!/bin/bash
# --------------------------------------------------------------------- #
# AUTHOR: Alberto M. Esmoris Pena #
# BRIEF: Script to parse the Teeth3DS dataset and derive LAZ files. #
# Must be called from Teeth3DS/data directory. #
# --------------------------------------------------------------------- #

# Name of (or path to) the LAStools txt2las executable
TXT2LAS=txt2las64

# Function to convert (x, y, z, class) samples to LAZ format
function sample_to_laz {
    sample=$1
    xyz=$(ls ${sample} | grep -i "\.obj" | sed 's/\.obj/\.xyz/g')
    laz=$(sed 's/\.xyz/\.laz/g' <<< ${xyz})
    # Join the vertex coordinates (OBJ "v" lines only) with the vertex labels
    paste -d ',' \
        <(grep '^v ' ${sample}/*.obj | awk '{print($2","$3","$4)}') \
        <(python to_laz.py ${sample}/*.json) \
        > ${sample}/${xyz}
    ${TXT2LAS} -i ${sample}/${xyz} -set_version 1.4 -set_point_type 6 -iparse xyzc -o ${sample}/${laz}
}

# Convert samples in lower mouth
for sample in $(ls lower); do
    sample_to_laz lower/${sample}
done

# Convert samples in upper mouth
for sample in $(ls upper); do
    sample_to_laz upper/${sample}
done
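The step that joins coordinates and labels can also be reproduced without shell tools. The sketch below is an illustrative alternative and not part of the original workflow: it reads the vertex lines of an OBJ file and the `labels` array of the JSON file, then writes one `x,y,z,class` record per vertex. The function name `obj_to_xyzc` is hypothetical, and the sketch assumes that the JSON contains exactly one label per vertex.

```python
import json


def obj_to_xyzc(obj_path, json_path, out_path):
    """Write one 'x,y,z,class' line per OBJ vertex (hypothetical helper)."""
    # Keep only geometric vertices ("v x y z ..."), skipping vn, vt, f, etc.
    vertices = []
    with open(obj_path, 'r') as objf:
        for line in objf:
            fields = line.split()
            if len(fields) >= 4 and fields[0] == 'v':
                vertices.append(fields[1:4])
    # Per-vertex labels, the same array that to_laz.py prints
    with open(json_path, 'r') as jsonf:
        labels = json.load(jsonf)['labels']
    # A mismatch would silently shift labels, so fail early instead
    if len(vertices) != len(labels):
        raise ValueError(
            f'{len(vertices)} vertices but {len(labels)} labels'
        )
    with open(out_path, 'w') as outf:
        for (x, y, z), label in zip(vertices, labels):
            outf.write(f'{x},{y},{z},{label}\n')
    return len(vertices)
```

The resulting text file has the same layout as the `.xyz` file written by the Bash script, so it could be passed to `txt2las` with the same arguments.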

This example will work with the data in the lower folder, i.e., jaw teeth. More concretely, a training point cloud is composed by merging the following point clouds using the CloudCompare software: 0EJBIPTC, 0DNK2I7H, 0EAKT1CU, 0NH6X4SS, ZOUIF2W4, 0AAQ6BO3, R7SB5B5N, OSZV3Q38, L9EKTZMV, FJS5HCDU, EJWZZZRF, C4LOTSKE, 0IU0UV8E, R8YTI9HB, L428SD7J, IBG3DGZJ, KAHYFGOY, 80RPZWJT, C3TQ47Z0, 01J4R99K, OS06596E, 0JN50XQR, I9TWNSD1, 01MAVT6A, 0OF8OOCX, JJ19KE8W, M4HYU284, PYAY9ZYX, VXRFUE19, Z83V9A9D, GPADPK3N, HM1A4QZR, 6X2UD6H6, 013TGCFK, 017FADFV, SL5I9AXM, 014JV25R, AKHIE0CJ, 14M656LK, P744BHYG, 20AHRBL3, X8I1PX6F, ZGH1UT1Q, R3UKC00Q, H5EFRXCQ, 0OTKQ5J9. The resulting training point cloud looks as shown in the image below:

Visualization of the training point cloud. Each class is represented by a different color. There are as many classes as expected teeth plus one representing the rest of the oral cavity.

The model was validated on the following point clouds, none of which was used for training: C6C00RHE, CVTHSBS5, 016A053T, 013NXP1H, 7IKF2TIW, 8WZSZBYG, 013JX8W4, R544MS3L, YTMRIXFD, YV4OEIZ5, YCJO4386, VD3KNUMV, 019PEUMN, 51MXL2ZA, 55EXF0WK, 58M9IXQ2, API3O9JV, ZM8PCSK6, XNEIPJH8, X9OQZ131, VR3C4L0M, UJPJ175B, SAIQAN8Y, V9CAFAV4, V68KILV2, S5VIQ478, Y9WQHQMT, XKTTBEE0, SZQ66Y5A, 01E84NTX, 01ADUNMV, 6BWQC0CT.
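The validation JSON lists one input path and one output path per point cloud, so both lists can be generated from the identifiers. The sketch below assumes the directory layout that appears in the validation JSON (`<root>/data/lower/<ID>/<ID>_lower.laz` for inputs and `<root>/vl3d/out/DL_SFLNETPP_X/T1/preds/<ID>/*` for outputs). The function name `build_validation_paths` is hypothetical.

```python
import json


def build_validation_paths(ids, root='/ext4/medical_data/Teeth3DS'):
    """Return the in_pcloud and out_pcloud lists for the given sample IDs."""
    in_pcloud = [f'{root}/data/lower/{i}/{i}_lower.laz' for i in ids]
    out_pcloud = [
        f'{root}/vl3d/out/DL_SFLNETPP_X/T1/preds/{i}/*' for i in ids
    ]
    return {'in_pcloud': in_pcloud, 'out_pcloud': out_pcloud}


if __name__ == '__main__':
    # First three validation identifiers, used here only as an example
    spec = build_validation_paths(['C6C00RHE', 'CVTHSBS5', '016A053T'])
    print(json.dumps(spec, indent=2))
```

Serializing with `json.dumps` also guarantees that the generated lists are valid JSON, for instance without a trailing comma after the last path.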

JSON

Training JSON

The JSON below was used to train the model:

{
  "in_pcloud": [
    "/ext4/medical_data/Teeth3DS/data/lower/training010.laz"
  ],
  "out_pcloud": [
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/*"
  ],
  "sequential_pipeline": [
    {
      "class_transformer": "ClassReducer",
      "on_predictions": false,
      "input_class_names": [
        "00", "01", "02", "03", "04", "05", "06", "07", "08", "09",
        "10", "11", "12", "13", "14", "15", "16", "17", "18", "19",
        "20", "21", "22", "23", "24", "25", "26", "27", "28", "29",
        "30", "31", "32", "33", "34", "35", "36", "37", "38", "39",
        "40", "41", "42", "43", "44", "45", "46", "47", "48", "49"
      ],
      "output_class_names": [
        "mouth",
        "jaw31", "jaw32", "jaw33", "jaw34", "jaw35", "jaw36", "jaw37", "jaw38",
        "jaw41", "jaw42", "jaw43", "jaw44", "jaw45", "jaw46", "jaw47", "jaw48"
      ],
      "class_groups": [
        ["00"],
        ["31"], ["32"], ["33"], ["34"], ["35"], ["36"], ["37"], ["38"],
        ["41"], ["42"], ["43"], ["44"], ["45"], ["46"], ["47"], ["48"]
      ],
      "report_path": "*training_class_reduction.log",
      "plot_path": "*training_class_reduction.svg"
    },
    {
      "train": "ConvolutionalAutoencoderPwiseClassifier",
      "training_type": "base",
      "fnames": ["ones"],
      "random_seed": null,
      "model_args": {
        "fnames": ["ones"],
        "num_classes": 17,
        "class_names": [
          "mouth",
          "jaw31", "jaw32", "jaw33", "jaw34", "jaw35", "jaw36", "jaw37", "jaw38",
          "jaw41", "jaw42", "jaw43", "jaw44", "jaw45", "jaw46", "jaw47", "jaw48"
        ],
        "pre_processing": {
          "pre_processor": "hierarchical_fpspp",
          "support_strategy_num_points": 1024,
          "to_unit_sphere": false,
          "support_strategy": "fps",
          "support_chunk_size": 10000,
          "support_strategy_fast": false,
          "receptive_field_oversampling": {
            "min_points": 64,
            "strategy": "knn",
            "k": 16,
            "radius": 0.5
          },
          "center_on_pcloud": true,
          "neighborhood": {
            "type": "sphere",
            "radius": 64.0,
            "separation_factor": 0.8
          },
          "num_points_per_depth": [16384, 4096, 1024, 256, 64],
          "fast_flag_per_depth": [false, false, false, false, false],
          "num_downsampling_neighbors": [1, 16, 16, 16, 16],
          "num_pwise_neighbors": [16, 16, 16, 16, 16],
          "num_upsampling_neighbors": [1, 16, 16, 16, 16],
          "nthreads": -1,
          "training_receptive_fields_distribution_report_path": "*/training_eval/training_receptive_fields_distribution.log",
          "training_receptive_fields_distribution_plot_path": "*/training_eval/training_receptive_fields_distribution.svg",
          "training_receptive_fields_dir": null,
          "receptive_fields_distribution_report_path": "*/training_eval/receptive_fields_distribution.log",
          "receptive_fields_distribution_plot_path": "*/training_eval/receptive_fields_distribution.svg",
          "_receptive_fields_dir": "*/training_eval/receptive_fields/",
          "training_support_points_report_path": "*/training_eval/training_support_points.las",
          "support_points_report_path": "*/training_eval/support_points.las"
        },
        "feature_extraction": {
          "type": "LightKPConv",
          "operations_per_depth": [2, 1, 1, 1, 1],
          "feature_space_dims": [64, 64, 128, 256, 512, 1024],
          "bn": true,
          "bn_momentum": 0.98,
          "activate": true,
          "sigma": [64.0, 64.0, 80.0, 96.0, 112.0, 128.0],
          "kernel_radius": [64.0, 64.0, 64.0, 64.0, 64.0, 64.0],
          "num_kernel_points": [15, 15, 15, 15, 15, 15],
          "deformable": [false, false, false, false, false, false],
          "W_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],
          "W_regularizer": [null, null, null, null, null, null],
          "W_constraint": [null, null, null, null, null, null],
          "A_trainable": [true, true, true, true, true, true],
          "A_regularizer": [null, null, null, null, null, null],
          "A_constraint": [null, null, null, null, null, null],
          "A_initializer": ["ones", "ones", "ones", "ones", "ones", "ones"],
          "_unary_convolution_wrapper": {
            "activation": "relu",
            "initializer": "glorot_uniform",
            "bn": true,
            "bn_momentum": 0.98,
            "feature_dim_divisor": 2
          },
          "hourglass_wrapper": {
            "internal_dim": [2, 2, 4, 16, 32, 64],
            "parallel_internal_dim": [8, 8, 16, 32, 64, 128],
            "activation": ["relu", "relu", "relu", "relu", "relu", "relu"],
            "activation2": [null, null, null, null, null, null],
            "regularize": [true, true, true, true, true, true],
            "W1_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],
            "W1_regularizer": [null, null, null, null, null, null],
            "W1_constraint": [null, null, null, null, null, null],
            "W2_initializer": ["glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform", "glorot_uniform"],
            "W2_regularizer": [null, null, null, null, null, null],
            "W2_constraint": [null, null, null, null, null, null],
            "loss_factor": 0.1,
            "subspace_factor": 0.125,
            "feature_dim_divisor": 4,
            "bn": false,
            "bn_momentum": 0.98,
            "out_bn": true,
            "out_bn_momentum": 0.98,
            "out_activation": "relu"
          }
        },
        "features_alignment": null,
        "downsampling_filter": "strided_lightkpconv",
        "upsampling_filter": "mean",
        "upsampling_bn": true,
        "upsampling_momentum": 0.98,
        "upsampling_hourglass": {
          "activation": "relu",
          "activation2": null,
          "regularize": true,
          "W1_initializer": "glorot_uniform",
          "W1_regularizer": null,
          "W1_constraint": null,
          "W2_initializer": "glorot_uniform",
          "W2_regularizer": null,
          "W2_constraint": null,
          "loss_factor": 0.1,
          "subspace_factor": 0.125
        },
        "conv1d": false,
        "conv1d_kernel_initializer": "glorot_normal",
        "output_kernel_initializer": "glorot_normal",
        "model_handling": {
          "summary_report_path": "*/model_summary.log",
          "training_history_dir": "*/training_eval/history",
          "_features_structuring_representation_dir": "*/training_eval/feat_struct_layer/",
          "kpconv_representation_dir": "*/training_eval/kpconv_layers/",
          "skpconv_representation_dir": "*/training_eval/skpconv_layers/",
          "lkpconv_representation_dir": "*/training_eval/lkpconv_layers/",
          "slkpconv_representation_dir": "*/training_eval/slkpconv_layers/",
          "class_weight": [
            1.0,
            1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0,
            1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0
          ],
          "training_epochs": 300,
          "batch_size": 2,
          "training_sequencer": {
            "type": "DLSequencer",
            "random_shuffle_indices": true,
            "augmentor": {
              "transformations": [
                {
                  "type": "Scale",
                  "scale_distribution": {
                    "type": "uniform",
                    "start": 0.98,
                    "end": 1.02
                  }
                },
                {
                  "type": "Jitter",
                  "noise_distribution": {
                    "type": "normal",
                    "mean": 0,
                    "stdev": 0.03
                  }
                }
              ]
            }
          },
          "prediction_reducer": {
            "reduce_strategy" : {
              "type": "MeanPredReduceStrategy"
            },
            "select_strategy": {
              "type": "ArgMaxPredSelectStrategy"
            }
          },
          "checkpoint_path": "*/checkpoint.weights.h5",
          "checkpoint_monitor": "loss",
          "learning_rate_on_plateau": {
            "monitor": "loss",
            "mode": "min",
            "factor": 0.1,
            "patience": 2000,
            "cooldown": 5,
            "min_delta": 0.01,
            "min_lr": 1e-6
          }
        },
        "compilation_args": {
          "optimizer": {
            "algorithm": "Adam",
            "learning_rate": {
              "schedule": "exponential_decay",
              "schedule_args": {
                "initial_learning_rate": 1e-2,
                "decay_steps": 1024,
                "decay_rate": 0.96,
                "staircase": false
              }
            }
          },
          "loss": {
            "function": "class_weighted_categorical_crossentropy"
          },
          "metrics": [
            "categorical_accuracy"
          ]
        },
        "architecture_graph_path": "*/model_graph.png",
        "architecture_graph_args": {
          "show_shapes": true,
          "show_dtype": true,
          "show_layer_names": true,
          "rankdir": "TB",
          "expand_nested": true,
          "dpi": 300,
          "show_layer_activations": true
        }
      },
      "autoval_metrics": null,
      "training_evaluation_metrics": null,
      "training_class_evaluation_metrics": null,
      "training_evaluation_report_path": null,
      "training_class_evaluation_report_path": null,
      "training_confusion_matrix_report_path": null,
      "training_confusion_matrix_plot_path": null,
      "training_class_distribution_report_path": null,
      "training_class_distribution_plot_path": null,
      "training_classified_point_cloud_path": null,
      "training_activations_path": null
    },
    {
      "writer": "PredictivePipelineWriter",
      "out_pipeline": "*/pipe/SFLNET.pipe",
      "include_writer": false,
      "include_imputer": false,
      "include_feature_transformer": false,
      "include_miner": false,
      "include_class_transformer": false,
      "include_clustering": false,
      "ignore_predictions": false
    }
  ]
}
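The `prediction_reducer` entry of the training JSON combines `MeanPredReduceStrategy` with `ArgMaxPredSelectStrategy`. Receptive fields can overlap, so a point may receive several probability vectors. Assuming the strategy names mean what they suggest, the vectors of each point are averaged and the class with the highest mean probability is selected. The sketch below illustrates this idea with NumPy. It is not the framework's code, and the function `reduce_and_select` and its arguments are hypothetical.

```python
import numpy as np


def reduce_and_select(num_points, rf_indices, rf_probs):
    """Average per-point probabilities over receptive fields, then argmax.

    rf_indices: list of 1D int arrays, the point indices in each field.
    rf_probs: list of (n_i, num_classes) arrays, one row per indexed point.
    """
    num_classes = rf_probs[0].shape[1]
    prob_sum = np.zeros((num_points, num_classes))
    count = np.zeros(num_points)
    for idx, probs in zip(rf_indices, rf_probs):
        # np.add.at accumulates correctly even if idx repeats a point
        np.add.at(prob_sum, idx, probs)
        np.add.at(count, idx, 1)
    # Points covered by no field keep all-zero probabilities
    mean_probs = prob_sum / np.maximum(count, 1)[:, None]
    return mean_probs, mean_probs.argmax(axis=1)


if __name__ == '__main__':
    # Two fields over three points; point 1 belongs to both fields
    indices = [np.array([0, 1]), np.array([1, 2])]
    probs = [
        np.array([[0.9, 0.1], [0.4, 0.6]]),
        np.array([[0.8, 0.2], [0.3, 0.7]]),
    ]
    mean_probs, labels = reduce_and_select(3, indices, probs)
    print(labels.tolist())  # [0, 0, 1]
```

In the usage example, point 1 would be assigned class 1 by the first field alone, but the mean over both fields favors class 0.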

Validation JSON

The following JSON was used to validate the model on unseen data:

{
  "in_pcloud": [
    "/ext4/medical_data/Teeth3DS/data/lower/C6C00RHE/C6C00RHE_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/CVTHSBS5/CVTHSBS5_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/016A053T/016A053T_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/013NXP1H/013NXP1H_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/7IKF2TIW/7IKF2TIW_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/8WZSZBYG/8WZSZBYG_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/013JX8W4/013JX8W4_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/R544MS3L/R544MS3L_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/YTMRIXFD/YTMRIXFD_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/YV4OEIZ5/YV4OEIZ5_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/YCJO4386/YCJO4386_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/VD3KNUMV/VD3KNUMV_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/019PEUMN/019PEUMN_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/51MXL2ZA/51MXL2ZA_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/55EXF0WK/55EXF0WK_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/58M9IXQ2/58M9IXQ2_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/API3O9JV/API3O9JV_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/ZM8PCSK6/ZM8PCSK6_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/XNEIPJH8/XNEIPJH8_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/X9OQZ131/X9OQZ131_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/VR3C4L0M/VR3C4L0M_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/UJPJ175B/UJPJ175B_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/SAIQAN8Y/SAIQAN8Y_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/V9CAFAV4/V9CAFAV4_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/V68KILV2/V68KILV2_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/S5VIQ478/S5VIQ478_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/Y9WQHQMT/Y9WQHQMT_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/XKTTBEE0/XKTTBEE0_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/SZQ66Y5A/SZQ66Y5A_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/01E84NTX/01E84NTX_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/01ADUNMV/01ADUNMV_lower.laz",
    "/ext4/medical_data/Teeth3DS/data/lower/6BWQC0CT/6BWQC0CT_lower.laz"
  ],
  "out_pcloud": [
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/C6C00RHE/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/CVTHSBS5/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/016A053T/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/013NXP1H/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/7IKF2TIW/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/8WZSZBYG/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/013JX8W4/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/R544MS3L/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/YTMRIXFD/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/YV4OEIZ5/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/YCJO4386/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/VD3KNUMV/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/019PEUMN/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/51MXL2ZA/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/55EXF0WK/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/58M9IXQ2/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/API3O9JV/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/ZM8PCSK6/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/XNEIPJH8/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/X9OQZ131/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/VR3C4L0M/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/UJPJ175B/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/SAIQAN8Y/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/V9CAFAV4/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/V68KILV2/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/S5VIQ478/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/Y9WQHQMT/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/XKTTBEE0/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/SZQ66Y5A/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/01E84NTX/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/01ADUNMV/*",
    "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/preds/6BWQC0CT/*"
  ],
  "sequential_pipeline": [
    {
      "class_transformer": "ClassReducer",
      "on_predictions": false,
      "input_class_names": [
        "00", "01", "02", "03", "04", "05", "06", "07", "08", "09",
        "10", "11", "12", "13", "14", "15", "16", "17", "18", "19",
        "20", "21", "22", "23", "24", "25", "26", "27", "28", "29",
        "30", "31", "32", "33", "34", "35", "36", "37", "38", "39",
        "40", "41", "42", "43", "44", "45", "46", "47", "48", "49"
      ],
      "output_class_names": [
        "mouth",
        "jaw31", "jaw32", "jaw33", "jaw34", "jaw35", "jaw36", "jaw37", "jaw38",
        "jaw41", "jaw42", "jaw43", "jaw44", "jaw45", "jaw46", "jaw47", "jaw48"
      ],
      "class_groups": [
        ["00"],
        ["31"], ["32"], ["33"], ["34"], ["35"], ["36"], ["37"], ["38"],
        ["41"], ["42"], ["43"], ["44"], ["45"], ["46"], ["47"], ["48"]
      ],
      "report_path": "*training_class_reduction.log",
      "plot_path": "*training_class_reduction.svg"
    },
    {
      "predict": "PredictivePipeline",
      "model_path": "/ext4/medical_data/Teeth3DS/vl3d/out/DL_SFLNETPP_X/T1/pipe/SFLNET.pipe"
    },
    {
      "writer": "ClassifiedPcloudWriter",
      "out_pcloud": "*predicted.las"
    },
    {
      "eval": "ClassificationEvaluator",
      "class_names": [
        "mouth",
        "jaw31", "jaw32", "jaw33", "jaw34", "jaw35", "jaw36", "jaw37", "jaw38",
        "jaw41", "jaw42", "jaw43", "jaw44", "jaw45", "jaw46", "jaw47", "jaw48"
      ],
      "metrics": ["OA", "P", "R", "F1", "IoU", "wP", "wR", "wF1", "wIoU", "MCC", "Kappa"],
      "class_metrics": ["P", "R", "F1", "IoU"],
      "report_path": "*report/global_eval.log",
      "class_report_path": "*report/class_eval.log",
      "_confusion_matrix_report_path" : "*report/confusion_matrix.log",
      "_confusion_matrix_plot_path" : "*plot/confusion_matrix.svg",
      "class_distribution_report_path": "*report/class_distribution.log",
      "class_distribution_plot_path": "*plot/class_distribution.svg"
    },
    {
      "eval": "ClassificationUncertaintyEvaluator",
      "class_names": [
        "mouth",
        "jaw31", "jaw32", "jaw33", "jaw34", "jaw35", "jaw36", "jaw37", "jaw38",
        "jaw41", "jaw42", "jaw43", "jaw44", "jaw45", "jaw46", "jaw47", "jaw48"
      ],
      "include_probabilities": true,
      "include_weighted_entropy": false,
      "include_clusters": false,
      "weight_by_predictions": false,
      "num_clusters": 0,
      "clustering_max_iters": 0,
      "clustering_batch_size": 0,
      "clustering_entropy_weights": false,
      "clustering_reduce_function": null,
      "gaussian_kernel_points": 256,
      "report_path": "*uncertainty/uncertainty.las",
      "plot_path": "*uncertainty/"
    }
  ]
}
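Both JSON specifications start with the same `ClassReducer` step, which maps the original labels to 17 contiguous classes: label 00 becomes `mouth`, and the labels 31 to 38 and 41 to 48 become the sixteen `jaw` classes. The sketch below illustrates that mapping with NumPy. It is not the framework's implementation. The function name `reduce_classes` is hypothetical, and mapping labels outside `class_groups` to -1 is an assumption made only for this example.

```python
import numpy as np

# Original labels kept by class_groups, in output order
KEPT_LABELS = [0] + list(range(31, 39)) + list(range(41, 49))
CLASS_NAMES = ['mouth'] + [f'jaw{label}' for label in KEPT_LABELS[1:]]


def reduce_classes(y):
    """Map original labels to indices 0..16 (hypothetical helper)."""
    # Lookup table over the 50 input classes "00".."49"; -1 marks labels
    # that belong to no group (assumption for this sketch only)
    lut = np.full(50, -1, dtype=int)
    lut[KEPT_LABELS] = np.arange(len(KEPT_LABELS))
    return lut[np.asarray(y, dtype=int)]


if __name__ == '__main__':
    y = np.array([0, 31, 38, 41, 48, 12])
    reduced = reduce_classes(y)
    print(reduced.tolist())         # [0, 1, 8, 9, 16, -1]
    print(CLASS_NAMES[reduced[3]])  # jaw41
```

The contiguous indices match the order of `class_names` in the model and evaluator entries, so index 9 corresponds to `jaw41`.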

Quantification

The table below shows the evaluation metrics, in percent, for each validation point cloud.

| PCLOUD | OA | P | R | F1 | IoU | wP | wR | wF1 | wIoU | MCC | Kappa |
|---|---|---|---|---|---|---|---|---|---|---|---|
| YTMRIXFD | 96.989 | 96.685 | 97.587 | 97.123 | 94.436 | 97.008 | 96.989 | 96.99 | 94.18 | 96.438 | 96.436 |
| 01E84NTX | 96.358 | 95.567 | 97.245 | 96.324 | 92.993 | 96.466 | 96.358 | 96.374 | 93.053 | 95.743 | 95.735 |
| Y9WQHQMT | 91.877 | 91.591 | 91.212 | 91.227 | 84.095 | 92.13 | 91.877 | 91.887 | 85.164 | 90.611 | 90.595 |
| API3O9JV | 95.212 | 94.692 | 94.055 | 94.188 | 89.713 | 95.302 | 95.212 | 95.155 | 91.161 | 94.487 | 94.476 |
| YCJO4386 | 96.89 | 95.693 | 96.521 | 96.074 | 92.486 | 96.935 | 96.89 | 96.897 | 94.004 | 96.025 | 96.023 |
| VR3C4L0M | 96.855 | 95.078 | 95.609 | 95.243 | 91.097 | 96.933 | 96.855 | 96.849 | 93.975 | 95.508 | 95.503 |
| 016A053T | 96.234 | 96.274 | 95.992 | 96.12 | 92.587 | 96.255 | 96.234 | 96.236 | 92.781 | 95.51 | 95.509 |
| YV4OEIZ5 | 96.875 | 95.992 | 97.653 | 96.781 | 93.834 | 96.95 | 96.875 | 96.888 | 94 | 96.162 | 96.154 |
| 01ADUNMV | 94.501 | 94.307 | 95.17 | 94.519 | 89.914 | 94.974 | 94.501 | 94.607 | 89.978 | 93.555 | 93.536 |
| V9CAFAV4 | 96.836 | 96.142 | 97.284 | 96.701 | 93.643 | 96.861 | 96.836 | 96.84 | 93.891 | 96.19 | 96.186 |
| XNEIPJH8 | 94.672 | 92.599 | 91.37 | 91.422 | 85.631 | 94.899 | 94.672 | 94.569 | 90.363 | 93.057 | 93.044 |
| S5VIQ478 | 94.122 | 83.831 | 82.178 | 82.958 | 79.685 | 96.024 | 94.122 | 95.036 | 90.709 | 93.112 | 93.086 |
| 019PEUMN | 95.114 | 96.29 | 96.064 | 96.022 | 92.547 | 95.256 | 95.114 | 95.003 | 90.717 | 94.358 | 94.325 |
| XKTTBEE0 | 96.678 | 96.381 | 96.552 | 96.447 | 93.17 | 96.713 | 96.678 | 96.684 | 93.599 | 96.044 | 96.043 |
| VD3KNUMV | 96.135 | 95.629 | 95.488 | 95.529 | 91.498 | 96.176 | 96.135 | 96.135 | 92.59 | 95.175 | 95.171 |
| 55EXF0WK | 96.08 | 96.066 | 96.466 | 96.232 | 92.802 | 96.149 | 96.08 | 96.095 | 92.532 | 95.22 | 95.218 |
| CVTHSBS5 | 96.617 | 96.735 | 95.97 | 96.319 | 92.952 | 96.663 | 96.617 | 96.619 | 93.494 | 95.955 | 95.951 |
| 51MXL2ZA | 97.221 | 96.765 | 97.318 | 97.019 | 94.235 | 97.253 | 97.221 | 97.225 | 94.614 | 96.655 | 96.654 |
| 8WZSZBYG | 97.352 | 96.659 | 96.988 | 96.807 | 93.844 | 97.373 | 97.352 | 97.355 | 94.865 | 96.627 | 96.626 |
| C6C00RHE | 95.882 | 90.332 | 90.253 | 90.278 | 87.442 | 96.855 | 95.882 | 96.351 | 93.006 | 95.201 | 95.187 |
| 013NXP1H | 94.625 | 95.568 | 92.786 | 93.223 | 88.741 | 94.799 | 94.625 | 94.319 | 89.901 | 93.845 | 93.82 |
| V68KILV2 | 95.001 | 92.905 | 95.786 | 94.237 | 89.397 | 95.263 | 95.001 | 95.061 | 90.75 | 93.897 | 93.869 |
| R544MS3L | 96.58 | 84.505 | 84.273 | 84.377 | 82.212 | 97.383 | 96.58 | 96.97 | 94.136 | 95.952 | 95.946 |
| 58M9IXQ2 | 96.581 | 96.453 | 97.266 | 96.845 | 93.906 | 96.593 | 96.581 | 96.574 | 93.408 | 96.118 | 96.114 |
| ZM8PCSK6 | 96.125 | 90.776 | 89.281 | 89.952 | 86.548 | 96.204 | 96.125 | 96.089 | 92.556 | 95.012 | 94.986 |
| 013JX8W4 | 96.807 | 89.747 | 90.67 | 90.186 | 87.686 | 97.17 | 96.807 | 96.97 | 94.137 | 96.103 | 96.094 |
| SZQ66Y5A | 85.34 | 67.526 | 60.386 | 61.862 | 58.114 | 88.197 | 85.34 | 85.653 | 81.66 | 81.58 | 81.439 |
| 7IKF2TIW | 95.063 | 87.258 | 88.071 | 87.427 | 82.757 | 95.251 | 95.063 | 95.022 | 90.794 | 93.949 | 93.922 |
| SAIQAN8Y | 92.615 | 88.715 | 88.879 | 88.132 | 82.023 | 92.905 | 92.615 | 92.44 | 87.721 | 91.081 | 91.041 |
| 6BWQC0CT | 96.137 | 95.43 | 96.928 | 96.133 | 92.598 | 96.213 | 96.137 | 96.144 | 92.603 | 95.355 | 95.347 |
| UJPJ175B | 96.557 | 96.36 | 96.962 | 96.639 | 93.59 | 96.6 | 96.557 | 96.566 | 93.409 | 95.944 | 95.943 |
| X9OQZ131 | 97.054 | 96.675 | 96.343 | 96.477 | 93.234 | 97.084 | 97.054 | 97.052 | 94.299 | 96.19 | 96.187 |
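Several of the metrics in the table can be derived from a confusion matrix. The sketch below computes OA together with class-averaged precision, recall, F1, and IoU, all in percent. It assumes that the P, R, F1, and IoU columns are unweighted means over the classes, which the report does not state explicitly. The function name `evaluate` is hypothetical, and the weighted variants, MCC, and Kappa are left out for brevity.

```python
import numpy as np


def evaluate(y_true, y_pred, num_classes):
    """Return OA and class-averaged P, R, F1, IoU in percent (sketch)."""
    # Confusion matrix: rows are reference classes, columns are predictions
    cm = np.zeros((num_classes, num_classes), dtype=np.int64)
    np.add.at(cm, (np.asarray(y_true), np.asarray(y_pred)), 1)
    tp = np.diag(cm).astype(float)
    fp = cm.sum(axis=0) - tp
    fn = cm.sum(axis=1) - tp

    def safe_div(num, den):
        # Classes with an empty denominator contribute zero
        return np.divide(num, den, out=np.zeros_like(num), where=den > 0)

    p = safe_div(tp, tp + fp)
    r = safe_div(tp, tp + fn)
    f1 = safe_div(2 * p * r, p + r)
    iou = safe_div(tp, tp + fp + fn)
    return {
        'OA': 100 * tp.sum() / cm.sum(),
        'P': 100 * p.mean(), 'R': 100 * r.mean(),
        'F1': 100 * f1.mean(), 'IoU': 100 * iou.mean(),
    }
```

Under this assumption, a class that the model misses entirely lowers the averaged scores much more than OA, which would be consistent with rows such as S5VIQ478 and R544MS3L, where OA stays above 94 while P and R fall below 85.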

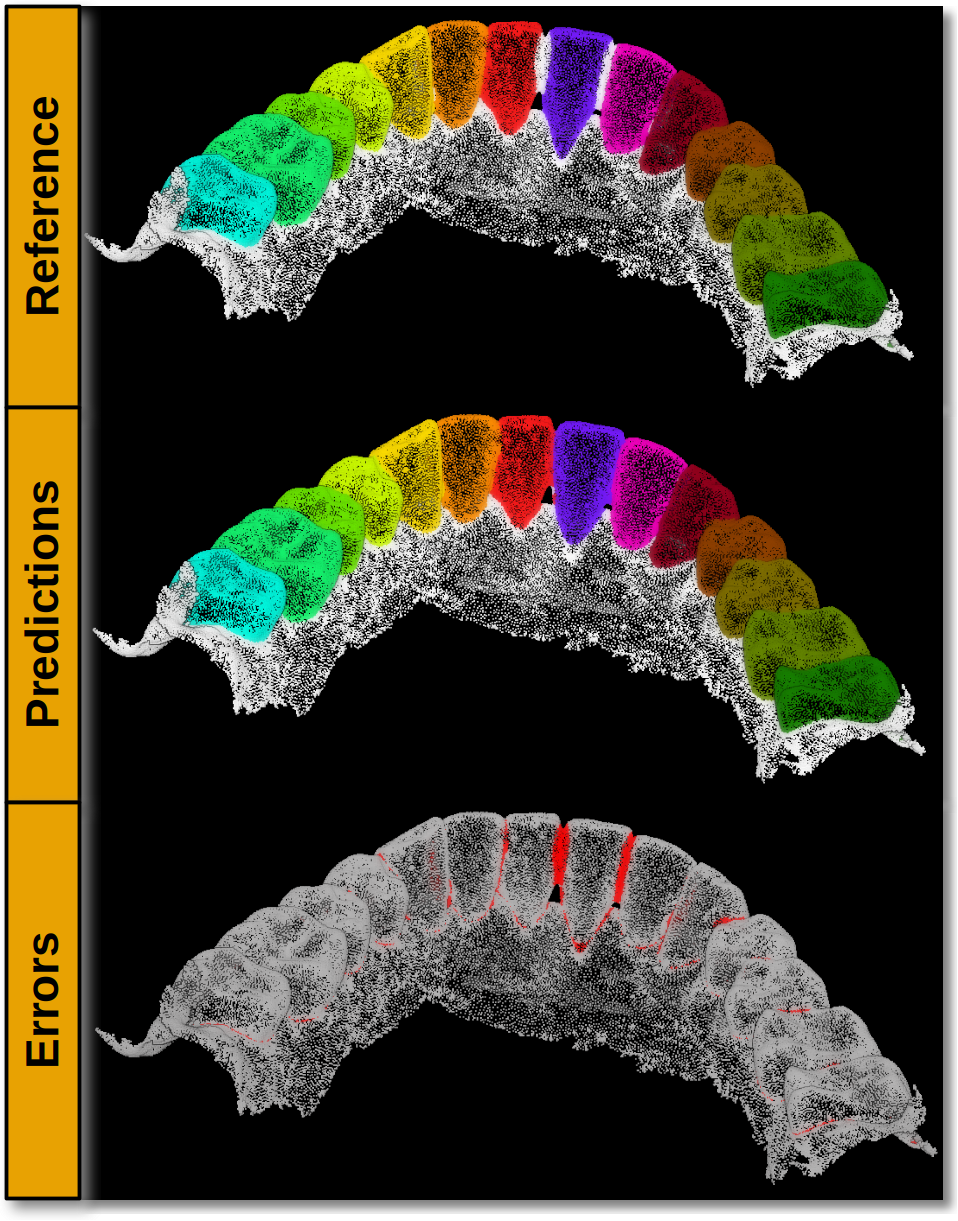

Visualization

The figure below shows the results of the model on validation data never seen before.

Visualization of the reference, predictions, and errors on a validation point cloud never seen before. Each class is represented with a different color.

Application

This example has two main applications:

Baseline model for teeth-wise segmentation of 3D point clouds with deep learning.

Exploration of how to apply deep learning models to 3D point clouds derived from mesh vertices. In this case, the 3D point clouds are composed of the vertices stored in Wavefront OBJ files.